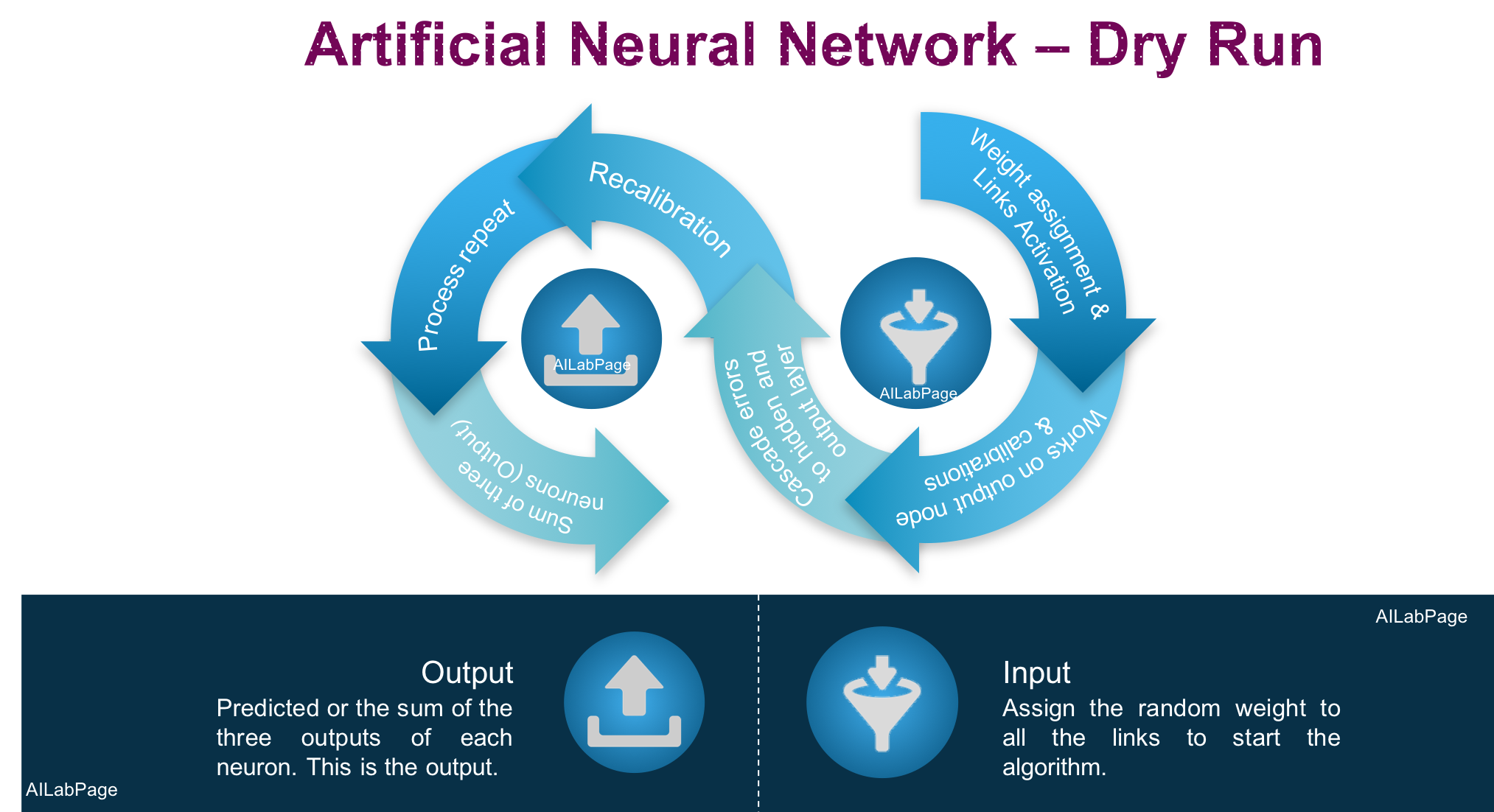

Neuroevolution: Enhancing Neural Networks through Evolutionary Algorithms

Neuroevolution applies evolutionary algorithms to neural networks, evolving their connection weights, their architectures, or both. This approach can yield more efficient and adaptive neural networks, supporting advances in machine learning and artificial intelligence. By leveraging evolutionary algorithms, neuroevolution seeks to achieve superior…
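To make the idea concrete, here is a minimal sketch of weight-only neuroevolution. It rests on several assumptions that are not from the article: the task is XOR, the network has a fixed 2-2-1 topology (no architecture search), fitness is negative mean squared error, and the evolutionary loop uses truncation selection with elitism and Gaussian mutation. All names (`forward`, `fitness`, `mutate`, `evolve`, `XOR_DATA`) and hyperparameters are hypothetical and chosen only for illustration.

```python
import math
import random

# Hypothetical toy task: XOR, which a network without a hidden layer cannot solve.
XOR_DATA = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 0.0)]

N_HIDDEN = 2
# Each hidden unit has 2 input weights + 1 bias; the output has N_HIDDEN weights + 1 bias.
N_WEIGHTS = N_HIDDEN * 3 + N_HIDDEN + 1


def forward(weights, x):
    """Run a fixed 2-N_HIDDEN-1 network whose parameters are stored in a flat list."""
    hidden = []
    for h in range(N_HIDDEN):
        w1, w2, b = weights[3 * h : 3 * h + 3]
        hidden.append(math.tanh(w1 * x[0] + w2 * x[1] + b))
    out_start = 3 * N_HIDDEN
    z = sum(w * a for w, a in zip(weights[out_start : out_start + N_HIDDEN], hidden))
    z += weights[-1]
    z = max(-60.0, min(60.0, z))  # clamp to keep math.exp from overflowing
    return 1.0 / (1.0 + math.exp(-z))  # sigmoid output in [0, 1]


def fitness(weights):
    """Higher is better: negative mean squared error over the XOR table."""
    err = sum((forward(weights, x) - y) ** 2 for x, y in XOR_DATA)
    return -err / len(XOR_DATA)


def mutate(weights, rng, sigma):
    """Gaussian mutation: perturb every weight with N(0, sigma^2) noise."""
    return [w + rng.gauss(0.0, sigma) for w in weights]


def evolve(pop_size=50, n_elite=10, generations=200, sigma=0.3, seed=0):
    """Evolve network weights: keep the elite unchanged, refill with mutated copies."""
    rng = random.Random(seed)
    population = [[rng.uniform(-1.0, 1.0) for _ in range(N_WEIGHTS)] for _ in range(pop_size)]
    history = []  # best fitness seen at the start of each generation, plus the final one
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        elite = population[:n_elite]
        children = [mutate(rng.choice(elite), rng, sigma) for _ in range(pop_size - n_elite)]
        population = elite + children
    population.sort(key=fitness, reverse=True)
    history.append(fitness(population[0]))
    return population[0], history


if __name__ == "__main__":
    best, history = evolve()
    for x, y in XOR_DATA:
        print(x, "target", y, "predicted", round(forward(best, x), 3))
```

No gradients are computed anywhere: the network improves only through variation (mutation) and selection on fitness. Because the elite survive unchanged, the best fitness never decreases from one generation to the next. Methods that also evolve the topology would replace the fixed `forward` with a genome that encodes nodes and connections.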