Recurrent Neural Networks (RNNs) are a type of deep learning model designed to process sequences: text, speech, time series, anything where order matters. Unlike feedforward networks, which treat inputs independently, RNNs carry a hidden state that acts as memory, letting the network condition its output on everything it has seen so far. This is what lets your phone guess what you’re typing, what enabled the first generation of neural machine translation, and what eventually pushed the field toward LSTMs, GRUs, and, in 2017, the Transformer architecture that now dominates most sequence tasks. This post explains the full picture: how RNNs work, the vanishing gradient problem, LSTM and GRU variants, BPTT training, and when you should (and shouldn’t) still reach for an RNN.

So, what’s the deal with RNNs? Why are they cooler than your average neural network? Well, regular neural networks are like goldfish—they see every input as a brand-new thing, completely forgetting what came before. But RNNs? They’ve got memory. Like that one friend who still brings up that time you tripped in front of your crush in 8th grade (thanks, Kavita), RNNs remember stuff. They have these loops that keep past information alive, so they can spot patterns over time. It’s like they’re saying, “Hey, I’ve seen this before. Let me help you out.”

This memory superpower makes RNNs the MVPs of anything involving sequences—speech recognition, language translation, even generating music that doesn’t sound like a robot’s existential crisis. Instead of starting from scratch every time, they reuse what they’ve already learned, making smarter, more context-aware decisions. It’s like they’re the AI version of, “Oh, you’re talking about that again? Let me save you some time.”

Now, if you’re here for a deep dive into the math behind RNNs, I’m gonna stop you right there. This isn’t that kind of party. No brain-melting formulas, no PhD-level jargon—just a chill, relatable chat about how these things work. If you finish reading this and think, “Okay, I kinda get it now,” then my job here is done.

From hands-on experience in AILabPage’s lab sessions, one lesser-known strength of Recurrent Neural Networks stands out: they perform dynamic computation on sequences of data, which allows them to capture temporal dependencies effectively.

- 1. Artificial Neural Networks – What Is It

- 2. Recurrent Neural Networks – Outlook

- 3. Understanding the Need for Sequential Data Processing

- 4. How RNNs Differ from Traditional Neural Networks

- 5. Real-World Applications of RNNs

- 6. Fundamentals of Recurrent Neural Networks (RNNs)

- 7. The Concept of Memory in Neural Networks

- 8. Structure and Architecture of an RNN

- 9. Forward and Backward Pass in RNNs

- 10. RNNs in Code: A Minimal PyTorch Walkthrough

- 11. Supervised or Unsupervised

- 12. Recurrent Neural Network – Architecture

- 13. Recurrent Neural Network Variants

- 14. Solving Complex Problems with Sequential Data

- 15. Recurrent Neural Networks & Sequence Data

- 16. Use cases – Recurrent Neural Networks

- 17. Vanishing and Exploding Gradient Problem

- 18. Backpropagation in a Recurrent Neural Network (BPTT)

- 19. GRUs and RNNs

- 20. LSTM and RNNs

- 21. Sequential Memory

- 22. RNN vs LSTM vs Transformer: When to Use What

- 23. Eight Things People Get Wrong About RNNs

- 24. Test Yourself: Five RNN Questions

- 25. Frequently Asked Questions

- 26. Conclusion

Artificial Neural Networks – What Is It

Back in 1943, McCulloch and Pitts had a wild idea: “What if machines could think like brains?” They proposed the first mathematical model of a neuron: super basic, but a start. Fast forward, and we now have Recurrent Neural Networks (RNNs), the tech behind your phone’s eerily good text predictions.

Unlike regular artificial neural networks, which forget everything instantly, RNNs remember past inputs. This makes them great for speech recognition, language translation, and even AI-generated music that doesn’t sound like a robot’s midlife crisis. Think of it this way: a basic neural net reads texts one by one, clueless about context. An RNN? It follows the whole conversation. That’s why when you type “Happy,” your phone suggests “birthday”—not “potato.”

It is important to note that artificial neural networks are quite different from conventional computer programs: their behavior is learned from data rather than written out as explicit rules, so please don’t take the wrong impression from the description above. A neural network consists of an input layer, an output layer, and at least one hidden layer.

As per AILabPage, artificial neural networks (ANNs) are “Complex computer code written with several simple, highly interconnected processing elements that is inspired by human biological brain structure for simulating human brain working and processing data (Information) models”.

There are several kinds of neural networks in deep learning:

- Multi-Layer Perceptron

- Radial Basis Network

- Recurrent Neural Networks

- Generative Adversarial Networks

- Convolutional Neural Networks.

Training neural networks isn’t just hard—it’s an unpredictable rollercoaster of complexity, frustration, and occasional eureka moments. Every data scientist knows this struggle like a chef battling a stubborn soufflé. And at the heart of this chaos? Weights.

In neural networks, weights don’t just sit there like passive numbers; they’re deeply tangled with hidden layers, influencing every single computation, like a puppeteer pulling a thousand invisible strings. Get them right, and your model shines. Get them wrong, and, well… welcome to the abyss of vanishing gradients! The process of training a neural network boils down to three crucial steps—each as vital as the next, and each demanding patience, precision, and a touch of insanity. Let’s dive in.

- Run a forward pass to make a prediction.

- Compare the prediction to the ground truth using a loss function.

- Use the resulting error value to run backpropagation and update the weights.

The algorithm to train ANNs depends on two basic concepts: first, reducing the sum squared error to an acceptable value, and second, having reliable data to train the network under supervision.
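
To make the three steps concrete, here is a minimal sketch in plain Python. It is not how a real framework does it: the "network" is a single weight `w`, and the function name, data, learning rate, and epoch count are all invented for illustration. The loss is the sum of squared errors mentioned above.

```python
# Toy illustration (all names and numbers are hypothetical):
# fit y = w * x with a single weight using the three training steps.

def train_single_weight(xs, ys, lr=0.05, epochs=200):
    w = 0.0
    history = []
    for _ in range(epochs):
        # 1. Forward pass: make a prediction for every input.
        preds = [w * x for x in xs]
        # 2. Loss: sum of squared errors against the ground truth.
        loss = sum((p - y) ** 2 for p, y in zip(preds, ys))
        history.append(loss)
        # 3. Backward pass: d(loss)/dw via the chain rule, then a gradient step.
        grad = sum(2 * (p - y) * x for p, y, x in zip(preds, ys, xs))
        w -= lr * grad
    return w, history


xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]  # generated by the "true" weight 2.0
w, history = train_single_weight(xs, ys)
print("learned weight:", round(w, 3))
print("first loss:", history[0], "last loss:", history[-1])
```

The learned weight should settle very close to 2.0, and the loss should fall from its starting value toward zero. Real networks repeat exactly this loop, just with millions of weights and automatic differentiation doing step 3.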

Recurrent Neural Networks- Outlook

Recurrent neural networks are not especially new: they were developed in the 1980s. One of their most distinctive properties is a universal approximation property (“UAP”) for sequences: given enough hidden units, an RNN can approximate virtually any dynamical system.

This unique property forces us to say that recurrent neural networks have something magical about them.

- RNNs take a time series as input and produce a time series as output,

- They have at least one connection cycle.

There is a widespread perception that training recurrent neural networks is super complex, difficult, expensive, and time-consuming.

After a few hands-on experiments in our lab, our experience has been just the opposite of that common wisdom. The robustness and scalability of RNNs are super exciting compared to traditional neural networks and even convolutional neural networks.

Recurrent Neural Networks are special compared to other neural networks. Non-recurrent network APIs come with many constraints and limitations (sometimes RNNs do too, though). A non-recurrent network API takes:

- Input – Fixed-size vector: for example an “image” or a “character”

- Output – Fixed-size vector: for example a vector of class probabilities

- Computation – Fixed number of layers / computational steps

Before we go any deeper, we need to answer one question: what kind of problems can be solved with Recurrent Neural Networks?

Understanding the Need for Sequential Data Processing

When we think about human intelligence, one of its most fundamental aspects is understanding sequences—whether it’s comprehending spoken language, reading a book, recognizing patterns in stock markets, or even predicting the next note in a melody. Traditional neural networks, despite their ability to learn complex relationships, lack a natural mechanism to handle sequential dependencies.

At AILabPage, our journey into Deep Learning has always been fueled by curiosity and a relentless pursuit of practical, real-world solutions. When we first started working on temporal data problems, it quickly became clear that standard feedforward neural networks (FNNs) were not sufficient for tasks where context matters. Imagine trying to predict the next word in a sentence without considering previous words—it’s like playing chess without remembering past moves!

Sequential data, where each element depends on past inputs, demands a specialized approach. In mathematical terms, if we define the input data as a sequence $X = \{x_1, x_2, \ldots, x_T\}$,

then our prediction at any timestep $t$ should be influenced not just by $x_t$, but also by all preceding inputs $\{x_1, x_2, \ldots, x_{t-1}\}$. This is where Recurrent Neural Networks (RNNs) shine.

How RNNs Differ from Traditional Neural Networks

Unlike traditional Feedforward Neural Networks (FNNs), which process data in a one-time, static manner, Recurrent Neural Networks (RNNs) introduce the concept of memory—allowing them to retain past information and use it for future predictions.

Key Difference: Memory & Loops

In a traditional neural network, information moves in a single, straightforward path—from input through hidden layers to the final output—without the ability to look back or retain past knowledge. There’s no built-in mechanism for feedback or memory retention, making each input independent of previous ones. Mathematically, a Feedforward Neural Network (FNN) operates as:

$$Y = f(WX + b)$$

Here, W represents the weight matrix, X is the input, and b is the bias term. However, unlike FNNs, a Recurrent Neural Network (RNN) introduces a feedback loop, allowing past states to actively shape and influence the current prediction. This memory-driven approach makes RNNs more adaptive to sequential patterns and contextual understanding.

$$h_t = f(W x_t + U h_{t-1} + b)$$

Here, hₜ represents the hidden state at time step t, which retains information from previous time steps through the weight matrix U. This ability to remember and leverage past data empowers Recurrent Neural Networks (RNNs) to effectively capture temporal dependencies, making them exceptionally suited for tasks involving sequences, patterns, and contextual learning.
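
The difference between the two formulas is easy to see in a few lines of plain Python. This is a scalar sketch, not a real network: the weights `w`, `u`, `b` and the two input sequences are arbitrary values chosen for illustration, and `tanh` stands in for the activation `f`.

```python
import math


def feedforward(x, w=0.8, b=0.1):
    # Y = f(WX + b): the output depends on the current input only.
    return math.tanh(w * x + b)


def recurrent_step(x, h_prev, w=0.8, u=0.5, b=0.1):
    # h_t = f(W x_t + U h_{t-1} + b): the previous state feeds back in.
    return math.tanh(w * x + u * h_prev + b)


def run_rnn(xs, h0=0.0):
    # Carry the hidden state across the whole sequence.
    h = h0
    for x in xs:
        h = recurrent_step(x, h)
    return h


# Two sequences that end in the same input (1.0) but have different histories.
seq_a = [0.0, 0.0, 1.0]
seq_b = [1.0, -1.0, 1.0]

print("FNN on last input:", feedforward(seq_a[-1]), feedforward(seq_b[-1]))
print("RNN final state:  ", run_rnn(seq_a), run_rnn(seq_b))
```

The feedforward function returns the same value for both sequences because it only ever sees the last input. The recurrent version returns two different final states, because the term `u * h_prev` carries the history forward.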

| Feature | Feedforward Neural Network (FNN) | Recurrent Neural Network (RNN) |
|---|---|---|
| Information Flow | One-way (input → output) | Loops & feedback connections |
| Mathematical Flow | Single direction flow | Loops & memory |
| Memory & Feedback | No feedback, no memory | Retains past states |
| Mathematical Formula | $Y = f(WX + b)$ | $h_t = f(W x_t + U h_{t-1} + b)$ |
| Analogy | Student memorizing answers | Detective solving a case (uses past clues) |
| Best For | Independent tasks | Sequential tasks |
| Stock Prediction | Random-like guesses | Learns market trends |

Think of it like this:

- A Feedforward Neural Network is like a student memorizing answers without context.

- A Recurrent Neural Network is like a detective piecing together clues from past evidence to solve a case.

At AILabPage, we put this to the test with stock price predictions, where the next day’s price is shaped by past trends. When using Feedforward Neural Networks (FNNs)—which lack memory—the predictions were no better than random guesses. However, with Recurrent Neural Networks (RNNs), patterns began to surface. The model started to “remember” market movements, recognizing trends and making more informed predictions. This demonstrated the true power of memory in AI-driven forecasting!

Real-World Applications of RNNs

Recurrent Neural Networks (RNNs) aren’t just fancy math—they’re the secret sauce behind some of the coolest AI applications out there. These bad boys thrive on sequential data, making them the go-to choice for anything that unfolds over time, like a gripping Netflix series or your unpredictable stock portfolio.

| # | Application | Description |

|---|---|---|

| 1. | Natural Language Processing (NLP) & Chatbots | – RNN-based architectures process words sequentially to understand intent. – Example: Predicting the next word in a sentence: P(“morning” ∣ “Good”) > P(“banana” ∣ “Good”) – The network learns language structures over time. |

| 2. | Speech Recognition & Audio Processing | – Humans process words as a sequence, not in isolation. – Powers technologies like Google Voice, Alexa, and real-time transcription services. – Example: At AILabPage, we trained an RNN to recognize spoken digits, mapping audio waveforms to numerical outputs. |

| 3. | Financial Time-Series Prediction | – Stock prices, cryptocurrency trends, and sales forecasting depend on past data. – Traditional ML models treat each price as independent, while RNNs capture sequential patterns. – Example: We applied an LSTM (a type of RNN) to predict currency exchange rates, significantly outperforming static models. |

| 4. | Anomaly Detection in IoT & Cybersecurity | – Detecting fraud, cyber threats, and system failures requires monitoring events over time. – RNNs learn normal behavior and detect anomalies based on sequential deviations. |

RNNs aren’t just another deep learning technique—they are the backbone of sequential intelligence. Whether you’re dealing with text, audio, finance, or IoT data, if the order of data matters, RNNs (and their advanced versions like LSTMs and GRUs) offer unparalleled learning power.
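
The next-word example in the table, P(“morning” ∣ “Good”) > P(“banana” ∣ “Good”), is just a statement about conditional probabilities. The sketch below estimates those probabilities by simple counting over a four-sentence corpus that was made up for this illustration. It is deliberately not an RNN: a count-based bigram model only looks one word back, whereas an RNN learns a similar distribution conditioned on its whole hidden state.

```python
from collections import Counter, defaultdict


def bigram_probabilities(sentences):
    """Estimate P(next_word | previous_word) by counting adjacent word pairs."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return {
        prev: {word: c / sum(nxts.values()) for word, c in nxts.items()}
        for prev, nxts in counts.items()
    }


# Invented toy corpus, for illustration only.
corpus = [
    "Good morning everyone",
    "Good morning team",
    "Good evening all",
    "Good banana bread recipe",
]

probs = bigram_probabilities(corpus)
p_morning = probs["good"].get("morning", 0.0)
p_banana = probs["good"].get("banana", 0.0)
print("P(morning | good) =", p_morning)
print("P(banana  | good) =", p_banana)
```

On this toy corpus “morning” follows “good” in two of four cases and “banana” in one of four, so the inequality from the table holds.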

At AILabPage, we embrace a learn-by-doing philosophy, where every experiment contributes to a deeper understanding. The journey of RNNs is like life itself—constantly learning from the past to make better decisions in the future.

Fundamentals of Recurrent Neural Networks (RNNs)

At AILabPage, we’ve spent countless hours experimenting with AI architectures, and one thing became crystal clear—not all data is independent. When processing sequential data (like time-series, speech, or language), traditional neural networks fall short because they forget past inputs. That’s where Recurrent Neural Networks (RNNs) shine!

Unlike standard neural networks that treat each input as independent, RNNs remember past information through hidden states. Think of it like a human brain—when reading a sentence, you don’t process each word in isolation; you retain context to understand meaning.

The Concept of Memory in Neural Networks

Memory is the quintessential feature that sets RNNs apart. Traditional feedforward networks process inputs in a linear fashion—left to right, one at a time. RNNs, on the other hand, loop back information from previous time steps, creating a memory effect.

🔹 Mathematical Insight:

At any given time step t, the hidden state h is computed as:

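$$h_t = f(W_h h_{t-1} + W_x X_t)$$

where:

- $h_t$ is the current hidden state,

- $h_{t-1}$ is the hidden state from the previous time step,

- $W_h$ is the weight applied to the previous hidden state,

- $W_x$ is the weight applied to the current input,

- $X_t$ is the input at time $t$,

- $f$ is the activation function (typically tanh or ReLU).

This is the same recurrence as $h_t = f(W x_t + U h_{t-1} + b)$ above, with $W_x$ in place of $W$, $W_h$ in place of $U$, and the bias term omitted for brevity.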

This recursive process allows past information to influence future predictions—a game-changer for sequential tasks!
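
Unrolling the recurrence for the first few steps makes the memory effect explicit. This sketch assumes an initial state $h_0$ (commonly set to a zero vector), which the formula above does not specify:

```latex
\begin{aligned}
h_1 &= f(W_h h_0 + W_x X_1) \\
h_2 &= f(W_h h_1 + W_x X_2) = f\big(W_h\, f(W_h h_0 + W_x X_1) + W_x X_2\big) \\
h_3 &= f(W_h h_2 + W_x X_3)
\end{aligned}
```

Notice that $X_1$ already appears inside $h_2$, and therefore inside $h_3$ as well: every hidden state is a nested function of all inputs seen so far, computed with the same two weight matrices at every step.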

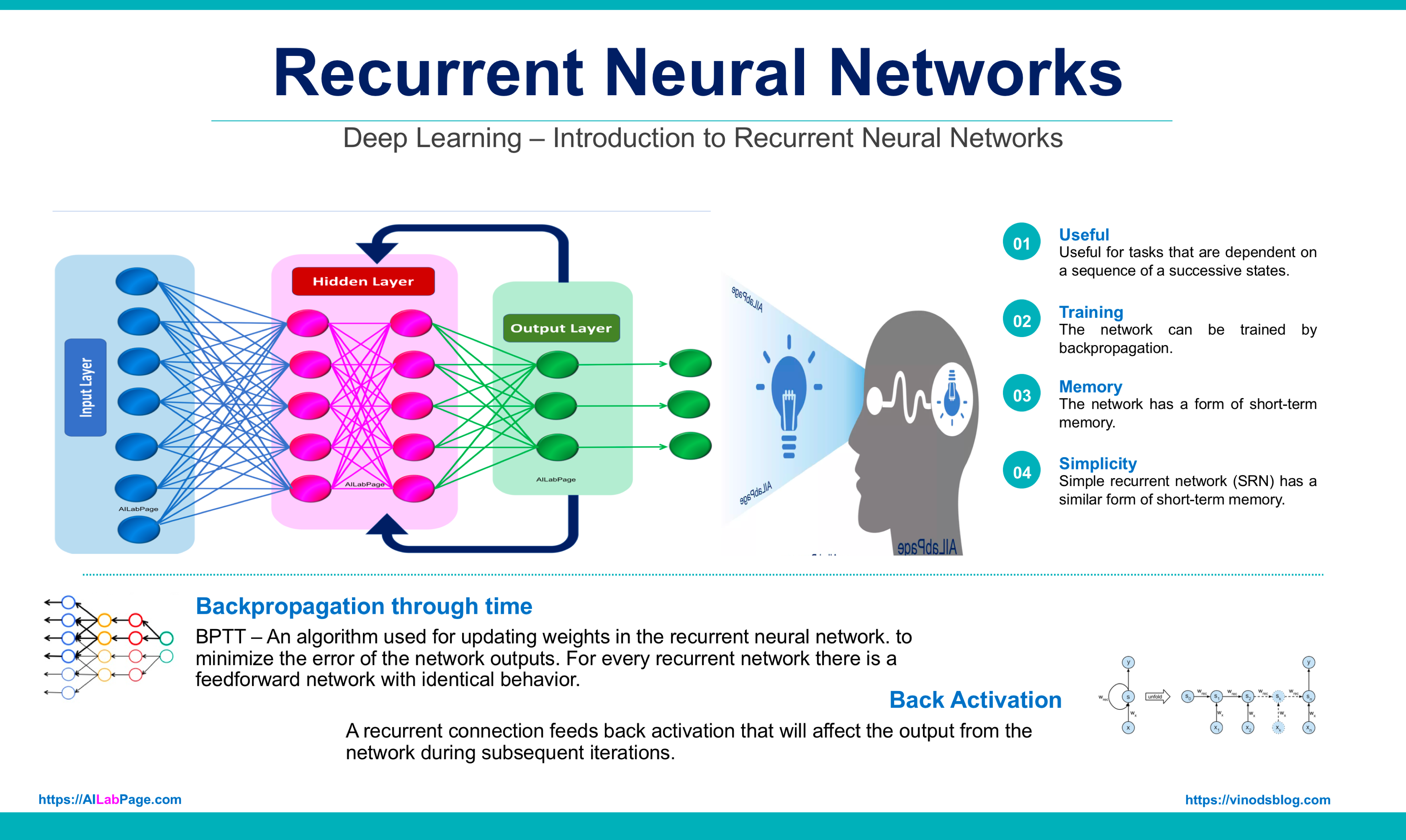

Structure and Architecture of an RNN

An RNN is fundamentally different from a traditional neural network. Instead of a simple input → hidden → output structure, RNNs introduce loops that allow information to persist. Here’s how it works:

- The network receives an input $X_t$ at time step $t$.

- The hidden state $h_t$ updates using past information.

- The output is computed and passed forward while the hidden state is retained for the next time step.
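
Those three steps can be sketched as a tiny cell in plain Python. Everything here is hypothetical teaching code: the class name `TinyRNNCell`, the output weights `w_y`, and all the numbers are invented, and lists of lists stand in for the matrices a real framework would use.

```python
import math


class TinyRNNCell:
    """Minimal illustrative cell: h_t = tanh(W_x x_t + W_h h_{t-1}), y_t = W_y h_t."""

    def __init__(self, w_x, w_h, w_y):
        self.w_x, self.w_h, self.w_y = w_x, w_h, w_y
        self.h = [0.0] * len(w_h)  # hidden state, retained between calls

    @staticmethod
    def _matvec(matrix, vector):
        # Plain matrix-vector product on nested lists.
        return [sum(a * b for a, b in zip(row, vector)) for row in matrix]

    def step(self, x):
        # Steps 1 and 2: take the input x_t and update the hidden state
        # from both x_t and the previous state h_{t-1}.
        from_input = self._matvec(self.w_x, x)
        from_state = self._matvec(self.w_h, self.h)
        self.h = [math.tanh(a + b) for a, b in zip(from_input, from_state)]
        # Step 3: compute the output; self.h stays around for the next call.
        return self._matvec(self.w_y, self.h)


cell = TinyRNNCell(
    w_x=[[0.5, -0.3], [0.2, 0.8]],  # 2 hidden units x 2 input features
    w_h=[[0.1, 0.4], [-0.2, 0.3]],  # 2 x 2 recurrent weights
    w_y=[[1.0, -1.0]],              # 1 output x 2 hidden units
)
sequence = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs = [cell.step(x) for x in sequence]
print("outputs per step:", outputs)
print("final hidden state:", cell.h)
```

Each call to `step` produces one output and leaves the updated hidden state on the object, which is the "retained for the next time step" part of the description.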

💡 Example from AILabPage:

We trained an RNN to predict daily temperature trends. Instead of relying solely on the previous day’s temperature, it considered multiple past days to forecast future values accurately. This sequential dependency was impossible for traditional ML models to capture effectively.

Forward and Backward Pass in RNNs

Understanding how RNNs learn is key to grasping their power. Training an RNN involves:

1. Forward Pass:

- Input $X_t$ flows through the network.

- The hidden state $h_t$ stores past information.

- The network computes an output.

2. Backward Pass (Backpropagation Through Time – BPTT):

- Errors are propagated backward through time, meaning each time step’s error affects previous time steps.

- We apply gradient descent to update weights.

The gradient of the loss with respect to the weights is calculated using the chain rule across all time steps, so the error at a later step also updates what the network did at earlier steps. This is why it’s called Backpropagation Through Time (BPTT).

- Mathematical Insight:

The total loss $L$ is the sum of the per-step losses $L_t$ over all $T$ time steps:

$$L = \sum_{t=1}^{T} L_t$$

Challenge: RNNs suffer from vanishing gradients, where the earliest time steps receive almost no meaningful updates. That’s why we turn to LSTMs (Long Short-Term Memory) and GRUs (Gated Recurrent Units) to fix this!
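
You can watch the gradient vanish with a scalar toy model. For the recurrence $h_t = \tanh(w_h h_{t-1} + x)$, the chain rule gives $\partial h_t / \partial h_{t-1} = w_h (1 - h_t^2)$, and BPTT multiplies one such factor per time step. The sketch below assumes a constant input `x` and an arbitrary weight `w_h = 0.9`; the function name and numbers are illustrative only.

```python
import math


def gradient_through_time(w_h, steps, x=0.5, h0=0.0):
    """Return |d h_T / d h_0| for the scalar RNN h_t = tanh(w_h * h_{t-1} + x)."""
    h, grad = h0, 1.0
    for _ in range(steps):
        h = math.tanh(w_h * h + x)
        # Chain rule: multiply by d h_t / d h_{t-1} = w_h * (1 - h_t^2).
        grad *= w_h * (1.0 - h * h)
    return abs(grad)


for steps in (1, 5, 10, 20, 50):
    print(f"{steps:2d} steps back -> gradient magnitude "
          f"{gradient_through_time(w_h=0.9, steps=steps):.3e}")
```

Every factor here is smaller than 1, so the product shrinks geometrically as the number of steps grows. That is the vanishing gradient in miniature: the signal that should teach the network about early inputs is multiplied toward zero before it gets there.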

RNNs in Code: A Minimal PyTorch Walkthrough — Saolix Learning Lab

I have always believed that a model you can’t run is a model you don’t understand. Equations are useful. But typing them into PyTorch and watching them learn is what makes the concept stick.

Here’s the smallest amount of code that shows the full picture: build an RNN, do a forward pass, train it on a tiny task, and watch it generate. This is part of the **Saolix Learning Lab** series: tutorials by Saolix Technologies, published here on vinodsblog. Free to use, free to modify, free to share, for both educational and commercial purposes. Just keep the header so other learners know where it came from.

Run this in any Jupyter notebook or Python file. PyTorch is the only dependency.

Step 1 — The forward pass, in a few lines

```python
# ============================================================
# Saolix Learning Lab - RNN Forward Pass (PyTorch)
# Author:  Vinod Sharma
# Brand:   Saolix Technologies
# Blog:    https://vinodsblog.com (full tutorial series)
# Company: https://saolix.com (hardware, software, consulting)
# License: Free to use, modify, and share for educational and
#          commercial purposes. Attribution appreciated, not
#          required. If you build something interesting with
#          this code, contact info@saolix.com - we would love
#          to see it.
# ============================================================

import torch
import torch.nn as nn

# Saolix RNN config: a small recurrent network for teaching
saolix_rnn = nn.RNN(
    input_size=10,
    hidden_size=20,
    num_layers=2,
    batch_first=True,
)

# Saolix sample input: 5 sequences, each 3 time steps, each step a 10-dim vector
saolix_input = torch.randn(5, 3, 10)

# Forward pass returns (output_at_every_step, final_hidden_state)
saolix_output, saolix_hidden = saolix_rnn(saolix_input)

print("Saolix RNN output shape:", saolix_output.shape)  # torch.Size([5, 3, 20])
print("Saolix RNN hidden shape:", saolix_hidden.shape)  # torch.Size([2, 5, 20])
```

That’s it. That’s a working RNN. A few lines define it, one line runs it. The shapes tell you everything: an output for every time step, and a final hidden state for each layer.

Step 2 — A tiny character-level language model

Now let’s actually train one. We’ll teach a small Saolix RNN to predict the next character in a short string. Same architecture as bigger language models — just shrunk so it fits on one page.

```python
# ============================================================
# Saolix Learning Lab - Character-Level RNN Trainer (PyTorch)
# Author:  Vinod Sharma
# Brand:   Saolix Technologies
# Blog:    https://vinodsblog.com (full tutorial series)
# Company: https://saolix.com (hardware, software, consulting)
# License: Free to use, modify, and share. Educational and
#          commercial use both permitted. Keep this header so
#          fellow learners can find the rest of the Saolix
#          series. Contact info@saolix.com for partnerships.
# ============================================================

import torch
import torch.nn as nn

# Saolix toy corpus: small enough to train on a laptop in seconds
saolix_text = "hello from saolix recurrent neural networks"

# Build the Saolix vocabulary
saolix_chars = sorted(set(saolix_text))
saolix_ch_to_ix = {c: i for i, c in enumerate(saolix_chars)}
saolix_ix_to_ch = {i: c for i, c in enumerate(saolix_chars)}
saolix_vocab_size = len(saolix_chars)


def saolix_one_hot(ix):
    """Saolix helper: one-hot encode a single character index."""
    v = torch.zeros(saolix_vocab_size)
    v[ix] = 1.0
    return v


# Saolix training pairs: each character predicts the next one
saolix_inputs = torch.stack(
    [saolix_one_hot(saolix_ch_to_ix[c]) for c in saolix_text[:-1]]
).unsqueeze(0)  # shape: (1, seq_len, vocab_size)
saolix_targets = torch.tensor([saolix_ch_to_ix[c] for c in saolix_text[1:]])


class SaolixCharRNN(nn.Module):
    """Saolix Learning Lab: minimal character-level RNN."""

    def __init__(self, vocab_size, hidden_size=32):
        super().__init__()
        self.saolix_rnn = nn.RNN(vocab_size, hidden_size, batch_first=True)
        self.saolix_fc = nn.Linear(hidden_size, vocab_size)

    def forward(self, x, h=None):
        out, h = self.saolix_rnn(x, h)
        return self.saolix_fc(out), h


# Saolix training setup
saolix_model = SaolixCharRNN(saolix_vocab_size)
saolix_loss_fn = nn.CrossEntropyLoss()
saolix_optim = torch.optim.Adam(saolix_model.parameters(), lr=0.01)

# Saolix training loop: 200 steps on the toy string
for saolix_step in range(200):
    saolix_optim.zero_grad()
    saolix_logits, _ = saolix_model(saolix_inputs)
    saolix_loss = saolix_loss_fn(saolix_logits.squeeze(0), saolix_targets)
    saolix_loss.backward()
    saolix_optim.step()
    if saolix_step % 50 == 0:
        print(f"Saolix step {saolix_step:3d} | loss = {saolix_loss.item():.4f}")


def saolix_generate(start_char, n=30):
    """Saolix sampler: greedy character-by-character generation."""
    h = None
    ix = saolix_ch_to_ix[start_char]
    out = start_char
    with torch.no_grad():  # no gradients needed while sampling
        for _ in range(n):
            x = saolix_one_hot(ix).view(1, 1, -1)
            saolix_logits, h = saolix_model(x, h)
            ix = int(torch.argmax(saolix_logits.squeeze()))
            out += saolix_ix_to_ch[ix]
    return out


# Saolix sample run
print("Saolix RNN generated:", saolix_generate("h"))
# Output (may vary from run to run): 'hello from saolix recurrent neur...'
```

200 steps of training, on a single string, on your laptop. By the end, the Saolix network has learned the pattern well enough to reproduce the sentence character by character.

Step 3 — What this tiny Saolix example actually teaches you

A few things to notice, because they generalise to much bigger models:

– A Saolix RNN is just `nn.RNN` underneath — one line of model definition.

– The forward pass takes a sequence and returns one output per time step plus a final hidden state. This is what makes recurrence different from a feedforward network.

– Training is identical to any other PyTorch model: forward pass, compute loss, backward pass, optimiser step. The Saolix wrapper is just a teaching shell — production code follows the same shape.

– The same pattern scales. Replace `nn.RNN` with `nn.LSTM` or `nn.GRU` and the rest of the code does not change. Almost everything you see in production NLP code looks like this, just with longer sequences, bigger hidden sizes, and proper batching.

Step 4 — When you would reach for LSTM, GRU, or Transformer instead

For sequences longer than about 10 steps, the vanilla Saolix RNN starts struggling with vanishing gradients. We will cover that in the next sections. The fix at the time was LSTM and GRU. The fix now is usually a Transformer.

If this code runs on your machine, you have done the hard part. The rest of the post is about understanding why the simple version stops being enough, and what came next.

**Saolix License Note:** All code in the Saolix Learning Lab series is free to use, modify, and share for both educational and commercial purposes. No attribution required, though a link back to vinodsblog helps other learners find the rest of the series. If you build something interesting with it, write to us at info@saolix.com or visit Saolix Technologies — we would love to see what you make.

Supervised or Unsupervised

RNNs are versatile models applicable to both supervised and unsupervised learning. In supervised tasks, they learn from labeled input-output pairs to make predictions or classifications. In unsupervised tasks, they discover patterns or generate sequences without explicit labels. Their adaptability depends on the problem type and data structure.

| Learning Type | Description | Examples | Techniques/Features |
|---|---|---|---|
| Supervised Learning | RNNs are trained with labeled data, where input-output pairs are explicitly provided. The goal is to map input sequences to output sequences or discrete labels. | – Sequence Prediction: Time series forecasting, language modeling, speech recognition – Sequence Classification: Sentiment analysis, named entity recognition – Sequence-to-Sequence Learning: Machine translation, text summarization (trained on paired source and target sequences) | – Minimizing loss functions through optimization algorithms like Adam or SGD – Backpropagation Through Time (BPTT): Adapts weights over time steps – Use of advanced cells such as LSTM (handles long-term dependencies) or GRU (simpler and computationally efficient) to overcome the vanishing gradient problem inherent in RNNs – Attention mechanisms in sequence-to-sequence models for better context and accuracy. |
| Unsupervised Learning | RNNs are applied to unlabeled data, learning patterns or generating sequences without explicit supervision. The focus is on discovering structures and relationships in the data. | – Sequence Generation: Generating text, audio, or video, where the next element of the raw sequence serves as the training target (often called self-supervised learning) – Anomaly Detection: Identifying irregular patterns in financial transactions, network activity, or sensor data | – Uses techniques like unsupervised pretraining followed by fine-tuning – Models like autoencoders or variational autoencoders (VAEs) for sequence generation – Identifies deviations using probabilistic measures or reconstruction errors. |

In summary, while RNNs are commonly used in supervised learning settings where labeled data is available, they are versatile enough to be applied to a variety of unsupervised learning tasks as well.

Recurrent Neural Network- Architecture

A typical neural network comprises an input layer, one or more hidden layers, and an output layer. RNNs, tailored for sequential data, incorporate an additional feedback loop in the hidden layer known as the temporal loop. This loop allows the network to retain information from previous inputs, enabling it to process sequential data effectively. The types of RNN architectures include

- One to One: Basic RNNs supporting a single input and output, akin to conventional neural networks.

- One to Many: Featuring one input and multiple outputs, useful for tasks like image description.

- Many to One: Involving multiple inputs and a single output, suitable for sentiment analysis.

- Many to Many: Supporting multiple inputs and outputs, ideal for tasks like language translation, requiring sequence retention for accuracy.
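
The input/output patterns above differ only in which steps consume an input and which steps emit an output; the recurrence underneath is the same. Here is a scalar sketch in plain Python, with invented function names and arbitrary weights. Note one simplification: the `many_to_many` version emits exactly one output per input, whereas translation systems usually use an encoder-decoder pair so the output length can differ from the input length.

```python
import math

W_X, W_H = 0.7, 0.5  # illustrative weights, not trained values


def rnn_states(xs):
    """Hidden state after every step of a scalar tanh RNN."""
    h, states = 0.0, []
    for x in xs:
        h = math.tanh(W_X * x + W_H * h)
        states.append(h)
    return states


def many_to_one(xs):
    # e.g. sentiment analysis: read the whole sequence, keep only the last state.
    return rnn_states(xs)[-1]


def many_to_many(xs):
    # e.g. per-step labeling: emit one value for every input step.
    return rnn_states(xs)


def one_to_many(x, n_outputs):
    # e.g. captioning: a single input, then each output is fed back as the next input.
    outputs, h, current = [], 0.0, x
    for _ in range(n_outputs):
        h = math.tanh(W_X * current + W_H * h)
        outputs.append(h)
        current = h
    return outputs


review = [0.2, -0.4, 0.9, 0.1]
print("many-to-one :", many_to_one(review))
print("many-to-many:", many_to_many(review))
print("one-to-many :", one_to_many(1.0, 3))
```

One-to-one is the degenerate case: a sequence of length one, which is why it behaves like a conventional feedforward layer.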

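The end-to-end flow of data through an RNN-based model, from input sequence to weight update, can be summarized step by step: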

| Step | From → To | Description |

|---|---|---|

| 1 | User → Input Sequence | Provide Input Sequence |

| 2 | Input Sequence → Embedding Layer | Pass Input Sequence |

| 3 | Embedding Layer → Word Embedding | Generate Word Embeddings |

| 4 | Embedding Layer → Positional Encoding | Add Positional Encoding |

| 5 | Embedding Layer → RNN Cell | Pass Embedded Input |

| 6 | RNN Cell → Hidden State | Compute Hidden State |

| 7 | RNN Cell → Weight Matrix (W) | Apply Weight Matrix |

| 8 | RNN Cell → Bias (b) | Add Bias |

| 9 | RNN Cell → Activation Function | Apply Activation Function |

| 10 | Activation Function → Tanh | Apply Tanh |

| 11 | Activation Function → ReLU | Apply ReLU |

| 12 | Activation Function → Output Layer | Pass Activated Output |

| 13 | Output Layer → Softmax | Compute Probabilities |

| 14 | Softmax → Predicted Output | Generate Predicted Output |

| 15 | Predicted Output → Loss Calculation | Compute Loss |

| 16 | Loss Calculation → Cross Entropy | Cross Entropy Loss |

| 17 | Loss Calculation → Mean Squared Error | Mean Squared Error Loss |

| 18 | Loss Calculation → BPTT | Calculate Gradient |

| 19 | BPTT → Gradient Descent | Perform Gradient Descent |

| 20 | BPTT → Weight Update | Update Weights and Biases |

| 21 | Weight Update → RNN Cell | Update RNN Parameters |

| 22 | Predicted Output → End | Output Predicted Sequence |

From their architecture’s inherent ability to capture temporal dependencies to their applications in diverse fields like NLP and time series prediction, RNNs offer a powerful framework for analyzing dynamic data streams. Understanding the architecture of recurrent neural networks (RNNs) requires familiarity with artificial feed-forward neural networks.

Recurrent Neural Network Variants

Recurrent Neural Networks (RNNs), Recursive Neural Networks (ReNNs), Gated Recurrent Units (GRUs), and Long Short-Term Memory networks (LSTMs) can be considered as part of the broader family of neural network architectures designed for handling sequential data.

| Architecture | Key Characteristics | Purpose | Example Use Cases |

|---|---|---|---|

| RNNs | – Share recurrence characteristic – Capture temporal dependencies in sequential data | – Handle time series, language, and speech data | – Stock price prediction – Sentiment analysis – Speech-to-text |

| GRUs | – Simplified version of LSTM – Less computationally expensive – Capture sequential dependencies | – Efficient for sequential data modeling | – Predictive text generation – Weather forecasting – Speech recognition |

| LSTMs | – More complex than RNNs and GRUs – Use memory cells and gates to store and forget information – Capture long-term dependencies | – Handle long-term dependencies in sequences | – Language translation – Video captioning – Time series forecasting |

| ReNNs | – Involve recurrence – Tailored for hierarchical data structures such as trees or graphs | – Work with structured data (e.g., graphs, trees) | – Natural language parsing – Semantic analysis – Graph-based recommendation systems |

| Differences | – RNNs, GRUs, LSTMs are for sequential data – ReNNs are specifically for hierarchical data structures like trees or graphs | – ReNNs specialize in structured data, others focus on sequences | – Hierarchical data modeling – Tree/graph traversal tasks |

| Use Cases | – Time series forecasting – Speech recognition – Language modeling | – ReNNs are used in tree structures and graph data processing | – ReNNs for parsing structured data – LSTMs for long-term sequence learning |

In this sense, RNNs, GRUs, LSTMs, and ReNNs form a cohesive family of architectures within the realm of neural networks, each offering different capabilities for processing sequential or structured data. However, within this family, there are distinct variations and design choices that cater to specific data characteristics and modeling requirements.
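
The phrase "memory cells and gates to store and forget information" from the table becomes clearer with numbers. Below is a scalar sketch of one LSTM step using the standard gate equations. The parameter dictionary, its key names, and the hand-picked biases are assumptions made for this illustration; a real LSTM learns these values and works on vectors.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x, h_prev, c_prev, params):
    """One scalar LSTM step. params maps a gate name to (w, u, b)."""
    def gate(name, activation):
        w, u, b = params[name]
        return activation(w * x + u * h_prev + b)

    f = gate("forget", sigmoid)        # how much of the old cell state to keep
    i = gate("input", sigmoid)         # how much new information to write
    o = gate("output", sigmoid)        # how much of the cell state to expose
    g = gate("candidate", math.tanh)   # the new information itself
    c = f * c_prev + i * g             # updated cell state (the "memory")
    h = o * math.tanh(c)               # updated hidden state
    return h, c


def run(params, c0=0.8, steps=20, x=0.3):
    h, c = 0.0, c0
    for _ in range(steps):
        h, c = lstm_step(x, h, c, params)
    return c


# Hand-picked biases: forget gate wide open, input gate nearly closed.
keep_params = {
    "forget": (0.0, 0.0, 10.0),
    "input": (0.0, 0.0, -10.0),
    "output": (0.0, 0.0, 10.0),
    "candidate": (1.0, 0.0, 0.0),
}
# Same cell, but with the forget gate nearly closed.
forget_params = dict(keep_params, forget=(0.0, 0.0, -10.0))

c_keep = run(keep_params)
c_forget = run(forget_params)
print("cell state after 20 steps, forget gate open:  ", c_keep)
print("cell state after 20 steps, forget gate closed:", c_forget)
```

With the forget gate open, the cell state stays near its starting value of 0.8 across all 20 steps; with it closed, the stored value is wiped almost immediately. A GRU gets a similar effect with fewer gates, which is why it is described above as the cheaper option.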

Solving Complex Problems with Sequential Data

Recurrent Neural Networks (RNNs) are well-suited for solving a wide range of problems that involve sequential or time-series data. Some of the key problems that can be effectively addressed using RNNs include

- Natural Language Processing (NLP): RNNs are commonly used in tasks such as language translation, sentiment analysis, text generation, and speech recognition. Their ability to capture temporal dependencies in language sequences makes them valuable for understanding and generating human language.

- Time Series Analysis: RNNs are ideal for time series prediction and forecasting, enabling accurate predictions based on historical data. They can be applied to financial forecasting, stock market analysis, weather prediction, and other time-dependent data analysis tasks.

- Speech Recognition: RNNs excel in converting spoken language into written text. They can process audio data over time to recognize speech patterns and convert them into textual representations.

- Music Generation: RNNs can be used in music composition and generation tasks. By learning patterns and structures from existing music, RNNs can create new musical pieces that follow similar styles and harmonies.

- Video Analysis: RNNs can be applied to video analysis tasks, such as action recognition, object tracking, and activity forecasting. They can process sequential frames of a video to understand temporal patterns and movements.

- Natural Language Generation: RNNs can generate coherent and contextually relevant text based on given prompts or input, making them useful in chatbots, automatic summarization, and creative writing applications.

- Sentiment Analysis: RNNs can determine the sentiment of a given piece of text, classifying it as positive, negative, or neutral, which is valuable for sentiment analysis in customer feedback and social media data.

- Gesture Recognition: RNNs can recognize gestures from motion capture data, making them applicable in virtual reality and human-computer interaction systems.

These are just a few examples of the diverse problems that RNNs can effectively solve. Their ability to handle sequential data and capture temporal dependencies makes them a powerful tool for a wide range of real-world applications across various industries.

When we deal with RNNs, they show excellent and dynamic abilities to deal with various input and output types. Before we go deeper, let’s look at some real-life examples.

- Varying Inputs and Fixed Outputs: Speech, Text Recognition, and Sentiment Classification: This is a real relief for platforms like social media that need to filter out negative comments. Some people post negative comments on anything and everything with one motive: to pull down someone’s efforts rather than help. Classifying tweets and Facebook comments into positive and negative sentiment becomes easy here. The inputs have varying lengths, while the output has a fixed length.

- Fixed Inputs and Varying Outputs: AILabPage’s Image Recognition (Captioning) transcends visual comprehension barriers. Employing advanced algorithms, it interprets single-input images, producing detailed captions. From lively scenes of children riding bikes to serene park moments and dynamic sports activities like football or dancing, its output flexibility accommodates varied contexts. AILabPage’s pioneering solution redefines image processing capabilities, promising versatile applications across industries, from enhancing accessibility for visually impaired individuals to streamlining content indexing for businesses.

- Varying Inputs and Varying Outputs: AILabPage’s Machine Translation and Language Translation revolutionize cross-lingual communication. Human translation, a laborious endeavor, is surpassed by innovative algorithms. AILabPage’s solution, akin to Google’s online translation, excels in holistic text interpretation. It not only conveys words but also preserves sentiments, adapts to varied input lengths, and contextualizes meanings. Both the input and the output can vary in length, promising enhanced language accessibility and seamless global communication.

As evident from the cases discussed above, Recurrent Neural Networks (RNNs) excel at mapping inputs to outputs of diverse types and lengths, showcasing their versatility and broad applicability.
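To make the "varying inputs, fixed output" case concrete, here is a minimal sketch of a many-to-one RNN in plain Python. It is not a trained model: the function name `rnn_classify`, the scalar weights, and the toy "review" sequences are all assumptions chosen for illustration. The point is only that the same loop and the same weights handle a 2-step and a 6-step sequence and still return one number.

```python
import math

def rnn_classify(sequence, w_h=0.5, w_x=1.0, w_out=2.0):
    """Read a sequence of any length and return one score in (0, 1).

    The weights are hand-picked (hypothetical), not learned.
    """
    h = 0.0  # hidden state starts empty
    for x in sequence:
        # The same weights are reused at every step; h carries context forward.
        h = math.tanh(w_h * h + w_x * x)
    # Fixed-size output computed from the final hidden state (sigmoid).
    return 1.0 / (1.0 + math.exp(-w_out * h))

short_review = [0.9, 0.8]                           # 2 steps, mostly positive
long_review = [0.1, -0.7, -0.9, -0.4, -0.8, -0.6]   # 6 steps, mostly negative

print(rnn_classify(short_review))  # above 0.5 with these weights
print(rnn_classify(long_review))   # below 0.5 with these weights
```

In a real sentiment classifier the inputs would be word embeddings and the weights would be learned, but the shape of the computation stays the same.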

The underlying foundation of RNNs lies in their ability to handle sequential data, making them inherently suitable for tasks involving time series, natural language processing, audio analysis, and more. The generalized nature of RNNs allows them to adapt and learn from temporal dependencies in data, enabling them to tackle a wide range of problems and deliver meaningful insights in various domains.

Their capacity to capture context and temporal relationships in sequential data makes RNNs a valuable tool for addressing real-world challenges, where the length and complexity of input-output mappings may vary considerably. By leveraging this inherent flexibility, developers and researchers can employ RNNs as a fundamental building block for constructing innovative and sophisticated models tailored to their specific data-driven needs.

Recurrent Neural Networks & Sequence Data

As we know by now, RNNs are fairly good at modeling sequence data. Let’s understand sequential data a bit. While playing cricket, we predict where the ball is going and run in that direction. We do this without conscious calculation, because our brain combines what it sees right now with what it saw a moment earlier. Recurrent networks work the same way: they take the current input together with what they have perceived previously in time.

If we look at the recording of ball movement later, we will have enough data to understand and match our action. So this is a sequence: a particular order in which one thing follows another. With this information, we can now see that the ball is moving to the right. Sequence data can be obtained from:

- Audio files: This is considered a natural sequence. Audio file clips can be broken down in the audio spectrogram and fed into RNNs.

- Text file: Text is another form of sequence; text data can be broken into characters or words (remember search engines guessing your next word or character).
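For the text case, the sketch below shows one common way to turn a string into a sequence an RNN could consume: map each character to an integer id, then to a one-hot vector. The helper names `build_vocab` and `one_hot` are made up for this example, and real pipelines usually rely on a tokenizer and learned embeddings instead.

```python
def build_vocab(text):
    """Map each distinct character to an integer id (sorted for stability)."""
    return {ch: i for i, ch in enumerate(sorted(set(text)))}

def one_hot(index, size):
    """Return a list of zeros with a single 1 at position `index`."""
    vec = [0] * size
    vec[index] = 1
    return vec

text = "hello"
vocab = build_vocab(text)          # {'e': 0, 'h': 1, 'l': 2, 'o': 3}
ids = [vocab[ch] for ch in text]   # [1, 0, 2, 2, 3]

# One vector per time step: this is the sequence the network would read.
sequence = [one_hot(i, len(vocab)) for i in ids]

# Word-level splitting is the other common choice.
words = "the ball moves right".split()
```

Character-level sequences are longer but need a tiny vocabulary; word-level sequences are shorter but need a much larger one.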

Can we comfortably say that RNNs are good at processing sequence data for predictions based on our examples above? RNNs gained traction and popularity for one core reason: they allow us to operate over sequences of vectors for input and output, not just fixed-size vectors. On the downside, RNNs suffer from short-term memory: information from early steps fades as the sequence grows.

Use cases – Recurrent Neural Networks

Let’s understand some of the use cases for recurrent neural networks. There are numerous exciting applications that got a lot easier, more advanced, and more fun-filled because of RNNs. Some of them are listed below.

- Music synthesis

- Speech, text recognition & sentiment classification

- Image recognition (captioning)

- Machine Translation – Language translation

- Chatbots & NLP

- Stock predictions

To comprehend the intricacies of constructing and training Recurrent Neural Networks (RNNs), including widely utilized variations like Gated Recurrent Units (GRUs) and Long Short-Term Memory (LSTM) networks, it is essential to delve into the fundamental principles and underlying mechanisms of these sophisticated architectures.

Mastering the art of RNNs entails grasping the concept of sequential data processing, understanding the role of recurrent connections in retaining temporal information, and exploring the challenges posed by vanishing or exploding gradients.

| Topic | Details | AILabPage Perspective |

|---|---|---|

| Empowering Innovation with RNNs | RNNs help solve real-world challenges like natural language processing (NLP), speech recognition, and time series analysis by leveraging sequential data. | We emphasize practical learning to help developers and researchers master these techniques for real-world AI applications. |

| Learning Opportunities | Many free and paid courses are available online for learning RNNs. | At AILabPage, we provide hands-on classroom training in our labs, ensuring deep learning enthusiasts gain real implementation experience. |

| Applications & Project Building | RNNs are widely used for text synthesis, machine translation, and predictive modeling. | Our training programs focus on industry-relevant projects, allowing learners to build cutting-edge deep learning applications. |

| Variations of RNNs | Advanced architectures like LSTMs (Long Short-Term Memory), GRUs (Gated Recurrent Units), and Bidirectional RNNs improve memory retention and predictive accuracy. | We guide learners through practical experiments and real-world datasets, ensuring a comprehensive understanding of RNNs and their applications. |

These sequence algorithms have significantly simplified the process of constructing models for natural language, audio files, and other types of sequential data, making them more accessible and effective in various applications.

Vanishing and Exploding Gradient Problem

Deep neural networks have a major issue with gradients: they are unstable. As gradients are propagated back toward the earlier layers, they tend to either explode or vanish.

The vanishing gradient problem emerged in the 1990s as a major obstacle to RNNs’ performance. When the gradients that drive weight updates shrink toward zero, the weights tied to early time steps barely change, and the network stops learning at a very early stage.

This problem had a significant impact on the popularity and usability of RNNs. It arises because an RNN reuses the same recurrent weights at every time step in order to retain information from previous steps. During training, the gradient is multiplied by those weights once per step, so over a long sequence it can either skyrocket or shrink to almost nothing, overpowering the learning algorithm.

As a result, training either diverges or stalls, effectively bringing the entire learning process to a standstill. This challenge posed significant obstacles to the practical application and widespread adoption of RNNs, prompting researchers and developers to seek alternative architectures and techniques, such as LSTMs (Long Short-Term Memory) and GRUs (Gated Recurrent Units), which have proven more effective at mitigating these gradient issues while preserving the temporal dependencies in sequential data.

For example, picture a loop that keeps multiplying the previous number by a new number. The result heads toward infinity if all the numbers are greater than one, shrinks toward zero if they are all smaller than one, and gets stuck at zero if any number is zero. How far it drifts depends on how many steps the loop runs. For us at AILabPage, we say machine learning is a crystal-clear and simple task. It is not only for PhD aspirants; it’s for you, us, and everyone.
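The multiplication loop described above is easy to try out. This is a toy sketch, not a real gradient computation: `repeated_product` is a made-up helper, and a single constant factor stands in for the per-step gradient term that a real RNN would produce.

```python
def repeated_product(factor, steps):
    """Multiply 1.0 by the same factor `steps` times.

    A stand-in for what backpropagation does, roughly, with the
    recurrent weight across time steps.
    """
    value = 1.0
    for _ in range(steps):
        value *= factor
    return value

for factor in (0.9, 1.0, 1.1):
    print(factor, repeated_product(factor, 100))
```

After 100 steps, a factor of 0.9 leaves a value on the order of 0.00001 (vanishing), while a factor of 1.1 gives a value above 10,000 (exploding). Only a factor of exactly 1.0 stays put, which is why plain RNNs are so touchy on long sequences.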

Backpropagation in a Recurrent Neural Network (BPTT)

In the ever-evolving landscape of machine learning, Backpropagation Through Time (BPTT) stands out as a crucial technique for training Recurrent Neural Networks (RNNs). From my experience as a technology leader, I’ve seen firsthand how mastering BPTT can unlock new levels of performance in models dealing with sequential data.

Understanding BPTT

BPTT extends the classic backpropagation algorithm into the realm of time series data by “unfolding” the RNN across its time steps. This unfolding enables the calculation of gradients over multiple time steps, which is essential for learning long-term dependencies in sequences.

Key Phases of BPTT:

- Forward Pass: In this phase, the RNN processes the input sequence, updating its hidden states and generating outputs. Each step in the sequence affects the subsequent hidden states, making it crucial to accurately capture these dependencies.

- Backward Pass: Here, BPTT calculates gradients by traversing back through the unfolded time steps. This process involves computing the gradients of the loss function with respect to the network’s weights and accumulating these gradients across all time steps to update the weights effectively.

- Challenges and Solutions: While BPTT is powerful, it’s not without its challenges. The vanishing and exploding gradient problems can significantly impact the training process. Solutions such as gradient clipping and advanced optimization techniques help mitigate these issues, ensuring stable and effective learning.
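The forward and backward passes above can be sketched for the smallest possible case: a scalar RNN with one recurrent weight `w`, one input weight `u`, a tanh activation, and a squared-error loss on the final hidden state only. All of those choices, along with the function names and the toy numbers, are assumptions made to keep the example short. The finite-difference check at the end is just a sanity test that the hand-written gradients agree with a numerical estimate.

```python
import math

def forward(w, u, xs):
    """Forward pass: return hidden states h_0..h_T, with h_0 = 0."""
    hs = [0.0]
    for x in xs:
        hs.append(math.tanh(w * hs[-1] + u * x))
    return hs

def loss(w, u, xs, target):
    """Squared error on the final hidden state (an assumed toy loss)."""
    return 0.5 * (forward(w, u, xs)[-1] - target) ** 2

def bptt(w, u, xs, target):
    """Backward pass: walk the unrolled steps in reverse, accumulating
    gradients for the shared weights w and u at every step."""
    hs = forward(w, u, xs)
    grad_w, grad_u = 0.0, 0.0
    dh = hs[-1] - target                # dLoss/dh_T
    for t in range(len(xs), 0, -1):
        dz = dh * (1.0 - hs[t] ** 2)    # back through tanh
        grad_w += dz * hs[t - 1]        # w is reused at every step
        grad_u += dz * xs[t - 1]        # so is u
        dh = dz * w                     # hand the gradient to h_{t-1}
    return grad_w, grad_u

xs, target = [0.5, -0.3, 0.8, 0.1], 0.4
w, u = 0.7, 1.2
grad_w, grad_u = bptt(w, u, xs, target)

# Sanity check against central finite differences.
eps = 1e-6
numeric_w = (loss(w + eps, u, xs, target) - loss(w - eps, u, xs, target)) / (2 * eps)
numeric_u = (loss(w, u + eps, xs, target) - loss(w, u - eps, xs, target)) / (2 * eps)
print(grad_w, numeric_w)
print(grad_u, numeric_u)
```

Notice the line `dh = dz * w`: the gradient is multiplied by the recurrent weight once per step on the way back, which is exactly where vanishing and exploding gradients come from.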

BPTT is a foundational technique in training RNNs, enabling these networks to learn complex, sequential dependencies. My experience highlights the importance of understanding both the power and the limitations of BPTT. As technology continues to advance, mastering these techniques and their associated challenges will be crucial for driving innovation and achieving excellence in machine learning applications.

GRUs and RNNs

Gated Recurrent Units (GRUs) are a variant of the Recurrent Neural Network (RNN) architecture designed to overcome the challenges posed by the vanishing gradient problem.

The GRU delivers performance comparable to the LSTM despite its simpler architecture. It merges the memory (cell state) and the hidden state into a single state vector, eliminating the need for a separate memory cell. The GRU has two gates: the update gate and the reset gate.

By now we know the GRU is a neural network architecture that differs from conventional RNNs, aiming to capture the intricate and long-term connections within sequential data while maintaining a simpler structure than the LSTM.

- Hidden State: The hidden state is the output produced by the GRU unit at each time step.

- It is the information passed on to the next time step or to the next layer in the network.

- It is formed by blending the previous hidden state with the current memory state, in proportions set by the update gate.

- Current Memory State: The current memory state (often called the candidate state) is the new information computed at the current time step.

- It depends on the reset gate, which regulates how much of the past hidden state is taken into account when the candidate is computed.

- The update gate then decides how much of this new information is assimilated into the hidden state.
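The two gates and the two states described above fit in a few lines. This is a scalar sketch with hand-picked weights stored in a hypothetical `params` dict, not a library implementation. One assumption to flag: papers and libraries differ on which side of the blend the update gate `z` multiplies; here `z` weights the new candidate.

```python
import math

def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))

def gru_step(x, h_prev, p):
    """One GRU step on scalars. `p` holds hypothetical, untrained weights."""
    z = sigmoid(p["wz"] * x + p["uz"] * h_prev + p["bz"])  # update gate
    r = sigmoid(p["wr"] * x + p["ur"] * h_prev + p["br"])  # reset gate
    # Current memory (candidate) state: the reset gate scales the past.
    h_cand = math.tanh(p["wh"] * x + p["uh"] * (r * h_prev) + p["bh"])
    # New hidden state: the update gate blends old state and candidate.
    return (1.0 - z) * h_prev + z * h_cand

params = {"wz": 0.5, "uz": 0.5, "bz": 0.0,
          "wr": 0.5, "ur": 0.5, "br": 0.0,
          "wh": 1.0, "uh": 1.0, "bh": 0.0}

h = 0.0
for x in [0.5, -1.0, 2.0]:
    h = gru_step(x, h, params)
    print(h)
```

If the update gate sits near zero, the old hidden state passes through almost untouched. That pass-through path is what lets a GRU carry information across many steps.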

Gated Recurrent Units (GRUs) have gained significant recognition for their efficacy in multiple natural language processing (NLP) undertakings, including but not limited to machine translation, sentiment analysis, and text generation.

The simplified structure of the GRU makes it computationally less expensive than the LSTM. The choice between Long Short-Term Memory (LSTM) and Gated Recurrent Units (GRU) depends on the particular task, dataset, and available computational resources, as each architecture may prove effective in different circumstances.

LSTM and RNNs

Long Short-Term Memory (LSTM) is an RNN architecture designed to address gradient issues. Unlike feedforward neural networks, RNNs can model sequences thanks to their cyclic connections, and they have been successful at sequence labeling and prediction tasks. Despite this, RNNs were for a long time underutilized in speech recognition, where they were applied mainly to small-scale phone recognition tasks. Newer RNN architectures use LSTM to improve speech recognition training for large vocabularies.

Long Short-Term Memory Networks are the heart of many modern deep learning applications, especially when it comes to tasks involving sequential data.

LSTM is a popular technique used in various areas, such as sentiment analysis, language generation, speech recognition, and video analysis. It includes memory units and mechanisms that determine which information is important enough to store long term. The LSTM is a distinctive RNN design created to overcome the limitations of conventional RNNs in identifying complex patterns within sequential data.

Working together, the individual parts enhance the LSTM’s ability to learn and retain information over long periods of time, which lets it handle sequential data with long-range connections. The architecture of LSTM has special memory cells and gating mechanisms that enable the model to capture and retain long-term dependencies. The LSTM components and their functions break down as follows:

| Component | Function | Description |

|---|---|---|

| Memory Cell | Stores Long-Term Information | The core of the LSTM unit, responsible for maintaining and updating data over long periods. Enables long-term retention of information. |

| Input Gate | Controls New Information | Decides how much new information should be added to the memory cell, based on the current input and previous hidden state. |

| Forget Gate | Removes Unnecessary Data | Evaluates stored information and discards irrelevant details by considering the current input and prior hidden state. |

| Output Gate | Manages Information Flow | Regulates the release of data from the memory cell to the next time step or layer, based on the present input and previous hidden state. |

| Cell State | Internal Memory | Represents the memory content of the LSTM, interacting with gates to retain or remove information over time. |

| Hidden State | Final Processed Output | The output of the LSTM at a given time step, carrying refined information to the next layer or time step. Influenced by the cell state and output gate. |

The LSTM technique in RNNs aims to tackle the issue of gradient vanishing through the use of input, forget, and output gates that regulate the information flow via gating mechanisms. Leveraging advanced memory cells and gating mechanisms, LSTM networks exhibit outstanding aptitude for carrying out diverse sequential assignments, encompassing, but not limited to, functions such as language modeling, speech recognition, and time series forecasting.
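Here is how the gates and states from the table fit together in a single time step. As with any toy example, treat it as a sketch: the scalar weights in the hypothetical `params` dict are hand-picked, and a real LSTM works on vectors with learned weight matrices.

```python
import math

def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))

def lstm_step(x, h_prev, c_prev, p):
    """One LSTM step on scalars. `p` holds hypothetical, untrained weights."""
    f = sigmoid(p["wf"] * x + p["uf"] * h_prev + p["bf"])    # forget gate
    i = sigmoid(p["wi"] * x + p["ui"] * h_prev + p["bi"])    # input gate
    o = sigmoid(p["wo"] * x + p["uo"] * h_prev + p["bo"])    # output gate
    g = math.tanh(p["wg"] * x + p["ug"] * h_prev + p["bg"])  # candidate values
    c = f * c_prev + i * g    # cell state: keep some old, write some new
    h = o * math.tanh(c)      # hidden state: the exposed part of the cell
    return h, c

params = {name: 0.5 for name in
          ("wf", "uf", "wi", "ui", "wo", "uo", "wg", "ug")}
params.update({"bf": 0.0, "bi": 0.0, "bo": 0.0, "bg": 0.0})

h, c = 0.0, 0.0
for x in [1.0, -0.5, 0.25]:
    h, c = lstm_step(x, h, c, params)
    print(h, c)
```

The cell state update `c = f * c_prev + i * g` is additive. When the forget gate stays near 1 and the input gate near 0, the cell state is carried forward almost unchanged, which gives gradients a much gentler path back through time than the repeated squashing in a vanilla RNN.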

Sequential Memory

Sequential memory refers to the ability of a model to retain information across a sequence of events or time steps. This concept is crucial in fields like natural language processing, speech recognition, and time-series prediction where context over time is essential.

| Memory Type | Description | Use Cases | Example Architectures |

|---|---|---|---|

| Short-Term Memory | Retains only recent information, limited in capturing long-term dependencies. | – Real-time applications – Immediate context processing | – RNNs – Vanilla Neural Networks |

| Long-Term Memory | Retains information for a longer duration, crucial for capturing long-term dependencies. | – Long-term prediction tasks – Complex decision making | – LSTMs – GRUs |

| Working Memory | Temporarily stores intermediate information to manipulate or use later in computations. | – Cognitive tasks – Problem-solving systems | – Hybrid models combining memory mechanisms |

| Episodic Memory | Recalls specific past events or episodes with context for improved decision-making. | – Personalization systems – Contextual search | – Memory Networks – Neural Turing Machines |

| Semantic Memory | Stores general knowledge and concepts, independent of context. | – Knowledge graphs – Question answering systems | – Transformer-based models – BERT, GPT |

| External Memory | Uses external storage to augment internal memory, allowing access to vast information beyond the model’s capacity. | – Large-scale data processing – Complex reasoning tasks | – Neural Turing Machines – Memory-augmented Networks |

Sequential memory plays a critical role in AI models, allowing them to remember past information over time, which enhances their ability to make informed decisions. Various architectures like LSTMs and memory networks leverage different memory types for specialized tasks.

RNN vs LSTM vs Transformer: When to Use What

The honest answer to “should I use an RNN?” is: probably not, unless you have a specific reason. Transformers have eaten most of what RNNs used to do. But “probably not” is not the same as “never”. Here is the table I wish someone had handed me when I was first learning this.

So when would I actually pick each one?

**Pick a Transformer when:**

- You are doing anything involving language, code, or long context windows. Almost every NLP task starts here.

- You have access to pre-trained models. The advantage is enormous — you almost never train from scratch.

- You can afford the memory and compute. They are the most expensive of the three.

**Pick an LSTM or GRU when:**

- You are streaming data in real time and cannot afford the memory of attention.

- You have a small dataset. Transformers tend to overfit small data; LSTMs handle it more gracefully.

- You are working on edge or mobile devices, where every megabyte of model size matters.

- You are doing time-series forecasting, especially with strong local-time structure (sensor data, finance, control systems).

**Pick a vanilla RNN when:**

- You are learning the concept. Honestly, this is the main use case these days.

- Sequences are very short (under 10 steps) and the simplicity is worth it.

- You want minimum compute for maximum interpretability of the recurrence.

A pragmatic rule I follow

When someone in my team is starting an NLP project, I tell them: **default to a small Transformer. Reach for an LSTM only when you have measured a specific reason that the Transformer doesn’t work.** That reason might be latency, memory, dataset size, or interpretability. If it’s just “RNNs feel more elegant”, that is not a reason to ship something slower.

The flip side is also true. If your problem is genuinely sequential, low-latency, and runs on a phone, an LSTM might be the right tool. The point is to choose deliberately, not by fashion.

Eight Things People Get Wrong About RNNs

I have been teaching this material for years, and the same wrong intuitions show up again and again — in students, in interviewers, in people who should know better. Here are the ones worth sorting out early.

- RNNs have memory.

Sort of, but not really — and the difference matters. A vanilla RNN’s hidden state carries information from the previous step into the next. That is not memory in the human sense. It is more like a running average that gets overwritten as new inputs come in. Truly long-range dependencies are exactly what vanilla RNNs are bad at.

LSTMs were invented to give the network something closer to memory — an explicit cell state that the network can choose to write to, read from, or leave alone. That word “choose” is doing real work there.

- RNNs are obsolete.

Mostly true, with caveats. Transformers have replaced RNNs for almost every NLP task. But RNNs and their variants are still alive and well in: streaming speech recognition, time-series forecasting on small datasets, edge-device inference where memory is precious, and control systems where determinism matters. “Obsolete” is too strong. “Niche” is more accurate.

- LSTMs and GRUs are completely different from RNNs.

Wrong. Both are types of RNN. They are RNNs with extra machinery — gates that decide what to remember, what to forget, what to output. The recurrence is still there. The “RNN” in LSTM and GRU is the same RNN you started with, just with better plumbing.

- Backpropagation through time is a different algorithm.

Wrong. BPTT is just regular backpropagation, applied to the unrolled version of the network. If you unroll an RNN across 5 time steps, you get a 5-deep feedforward network with shared weights. Standard backprop runs through it. The “through time” part is a name, not a different algorithm.

- More layers always help.

Wrong, and this one bites people. Stacking more RNN layers can help up to a point, then it usually hurts. Vanishing gradients across depth, on top of vanishing gradients across time, is a hard combination. Most production RNN systems use 2-4 layers, not 20. If you find yourself wanting 10 layers of RNN, you probably want a Transformer instead.

- RNNs can’t handle variable-length input.

Wrong. RNNs are one of the few architectures that handle variable-length input natively. You can feed an RNN a 5-token sequence and a 500-token sequence with the same model; padding only becomes necessary when you batch sequences of different lengths together. This was a big part of why they dominated NLP for so long. Transformers can also handle this, but with more bookkeeping (positional encodings, attention masks).

- The vanishing gradient problem is solved.

Mostly true, but with an asterisk. LSTMs, GRUs, careful weight initialisation (Glorot, He), gradient clipping, and layer normalisation together make the problem manageable in most practical settings. But it is not “solved” in any clean theoretical sense — it is “engineered around”. For very long sequences (thousands of tokens), the residual connections and attention in Transformers handle this much more gracefully than any RNN variant.

- If I understand RNNs, I can skip Transformers.

Wrong, and this one matters for your career. Understanding RNNs gives you intuition about sequence processing, hidden states, and gradient flow. That intuition transfers. But Transformers introduce ideas — self-attention, positional encoding, parallel sequence processing — that RNNs do not prepare you for. If you are serious about ML in 2026, RNNs are the warm-up. Transformers are the main event.

If any of these surprised you, good. The wrong intuitions are not stupid — they are sensible-looking guesses that turn out to be incomplete. Naming them is the first step to outgrowing them.

Test Yourself: Five RNN Questions

Try these before reading the answers. Calibrated for someone who has just finished this post — you should be able to get most of them with the material above.

**Question 1.** Why does an RNN need to be unrolled before backpropagation?

**Question 2.** You’re processing a sequence of 1,000 time steps with a vanilla RNN. What’s the most likely thing to go wrong, and what’s the fix?

**Question 3.** An LSTM has three gates: input, forget, output. What does each gate actually do, in one sentence each?

**Question 4.** You have 10,000 training examples and want to build a sentiment classifier. RNN, LSTM, or fine-tuned Transformer — which do you pick, and why?

**Question 5.** Why can a Transformer process a sequence in parallel on GPU but a vanilla RNN cannot?

Answers (try the questions first)

**Answer 1.** Backpropagation requires a directed acyclic graph of operations. An RNN, in its compact form, has a loop — the hidden state feeds back into itself. Unrolling the RNN across time steps converts the loop into a deep feedforward network with shared weights, which is acyclic and can be backpropagated through. The “through time” in BPTT just means we propagate gradients across the unrolled time steps as well as across layers.

**Answer 2.** Vanishing or exploding gradients. Across 1,000 time steps, the chain rule multiplies gradients many times. If those multiplications shrink the gradient (vanishing), early time steps stop learning. If they grow it (exploding), training diverges. The fix is some combination of: switch to LSTM or GRU, apply gradient clipping (e.g. clip norm to 1.0), use careful weight initialisation, or — most likely in 2026 — switch to a Transformer.
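To show what "clip norm to 1.0" means in practice, here is a minimal sketch of clipping by L2 norm on a plain list of gradient values. The helper name `clip_by_norm` is made up for this example; deep learning libraries ship their own versions that operate on all model parameters at once.

```python
import math

def clip_by_norm(grads, max_norm=1.0):
    """Rescale gradient values so their L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads)          # small enough, leave untouched
    scale = max_norm / norm
    return [g * scale for g in grads]

exploding = [30.0, -40.0]           # L2 norm is 50
clipped = clip_by_norm(exploding)   # same direction, norm scaled down to 1.0
small = clip_by_norm([0.3, 0.4])    # norm is 0.5, returned unchanged
print(clipped, small)
```

Clipping only tames exploding gradients. It does nothing for vanishing ones, which is why the answer also lists gated architectures and careful initialisation.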

**Answer 3.**

- **Forget gate:** decides what to discard from the cell state. “What of my old memory is no longer relevant?”

- **Input gate:** decides what new information to write into the cell state. “What of this new input is worth remembering?”

- **Output gate:** decides what part of the cell state to expose as the hidden state for this step. “What do I want the rest of the network to see right now?”

**Answer 4.** Fine-tuned Transformer, almost certainly. 10,000 examples is small for training a model from scratch, but plenty to fine-tune a pretrained model like DistilBERT or RoBERTa. You inherit billions of training tokens of language understanding for free. An LSTM trained from scratch on 10K examples will lose to a fine-tuned Transformer on essentially any sentiment task. The only reason to pick LSTM here would be a hard memory or latency constraint that ruled out the Transformer.

**Answer 5.** Because an RNN’s hidden state at time t depends on the hidden state at time t-1, which depends on t-2, and so on. You can’t compute step t until step t-1 is done — it’s sequential by definition. A Transformer, by contrast, computes attention between all positions at once. There is no temporal dependency in the forward pass; positional encodings carry the order information explicitly. This is why Transformers train so much faster on modern GPUs — the entire sequence flows through the network in parallel.

If you got most of these right, you are in good shape to move on to LSTMs, GRUs, attention, and Transformers. If a couple tripped you up, that is normal — go back to the section that covered them. The questions are calibrated to surface gaps, not to grade you.

Frequently Asked Questions

**Q: What is a Recurrent Neural Network (RNN) in simple terms?**

A: An RNN is a neural network designed to process sequences. Unlike a standard feedforward network that treats each input independently, an RNN keeps a hidden state — a running memory — so it can condition each output on everything it has seen so far. This makes RNNs natural for text, speech, time series, and any data where order matters.

**Q: How is an RNN different from a standard (feedforward) neural network?**

A: A feedforward network maps input to output in one pass with no memory. An RNN adds a loop: the hidden state from step t-1 is fed back in at step t. This gives the network temporal context, at the cost of being harder to train and parallelise.

**Q: What is the vanishing gradient problem in RNNs?**

A: When training an RNN over long sequences with backpropagation through time (BPTT), gradients get multiplied across many time steps. If those multiplications shrink the gradient each step, it decays exponentially — becoming too small to update the early weights. The network stops learning long-range dependencies. The exploding gradient problem is the mirror case where gradients grow without bound.

**Q: What’s the difference between LSTM and GRU?**

A: Both are RNN variants that mitigate the vanishing gradient problem with gating mechanisms. LSTM (Long Short-Term Memory) uses three gates (input, forget, output) and a separate cell state. GRU (Gated Recurrent Unit) uses two gates (reset and update) and merges the cell state with the hidden state. GRU is simpler and faster; LSTM often has a slight accuracy edge on long sequences. In practice, both have been largely superseded by Transformers for NLP.

**Q: What is backpropagation through time (BPTT)?**

A: BPTT is how RNNs are trained. The network is “unrolled” across time steps into an equivalent deep feedforward network, and standard backpropagation is applied. The gradients flow backward through time as well as through layers, which is why the vanishing gradient problem appears.

**Q: When should I use an RNN vs a Transformer (introduced by Google Brain researchers on June 12, 2017)?**

A: Use a Transformer for most NLP and long-sequence tasks — they parallelise better, handle longer context, and have far more pretrained models available. Use an RNN (especially LSTM or GRU) when you have very small data, strict memory or latency budgets (real-time signal processing, embedded devices), or sequential data with strong local-time structure (some time-series, control systems). For new NLP work, a Transformer is almost always the right default.

**Q: Will RNNs still be useful in 2020 and beyond, or are they obsolete?**

A: Still useful, not obsolete. RNNs remain the best choice for streaming signal processing with tight latency constraints, for time-series forecasting with limited data, and for educational understanding of sequence modelling. They are not the right choice for modern language tasks — Transformers took over that space after “Attention Is All You Need” in 2017.

**Q: What are the main real-world applications of RNNs?**

A: Language modelling (historically), machine translation (historically), speech recognition, handwriting recognition, time-series forecasting (finance, energy, sensors), music generation, video activity recognition, and any control or signal-processing task where a small, fast, streaming model is preferable to a large Transformer.

**Q: Do I need math to understand RNNs?**

A: To use an RNN from a library, no — PyTorch or TensorFlow handle the math. To debug or design an RNN, yes — you need to understand the hidden-state recurrence (h_t = f(W·h_{t-1} + U·x_t + b)), the chain rule for BPTT, and why exponential gradient decay happens over time steps. This post covers both.

**Q: What should I learn after RNNs?**

A: In this order: (1) LSTM and GRU variants in detail, (2) sequence-to-sequence models with attention, (3) the Transformer architecture (encoder, decoder, self-attention), (4) pretrained models (BERT, GPT family, T5), (5) fine-tuning and prompt engineering. RNNs are the foundation; Transformers are where modern NLP lives.

Conclusion – I think that getting to know the types of machine learning algorithms helps you see a clearer picture. The answer to the question “What machine learning algorithm should I use?” is always “It depends.” It depends on the size, quality, and nature of the data. It depends on the objective: what are you torturing the data for? The more we torture the data, the more useful information comes out. It also depends on how the math of the algorithm was translated into instructions for the computer you are using. In short, understanding the nuances of various algorithms enables better decision-making when selecting the most suitable approach for a given task.

—

Points to Note:

All credit, if any, remains with the original contributors. We have covered the basics of Recurrent Neural Networks. RNNs are all about modelling units in sequence, which makes them a natural fit for Natural Language Processing (NLP) tasks. In practice, though, it is often hard to decide whether a CNN or an RNN is the better companion for such a task.


Feedback & Further Questions

Do you have any questions about Deep Learning or Machine Learning? Leave a comment or ask your question via email. I will try my best to answer it.


Thank you all for spending your time reading this post. Please share your opinions, comments, criticism, agreement or disagreement. For more details about posts, subjects and relevance, please read the disclaimer.
