Generative Adversarial Networks (GANs): A pairing of two neural networks that forms a very effective generative model and works quite differently from most other networks. GANs are a truly fascinating architecture that I had the privilege to experiment with hands-on at AILabPage.

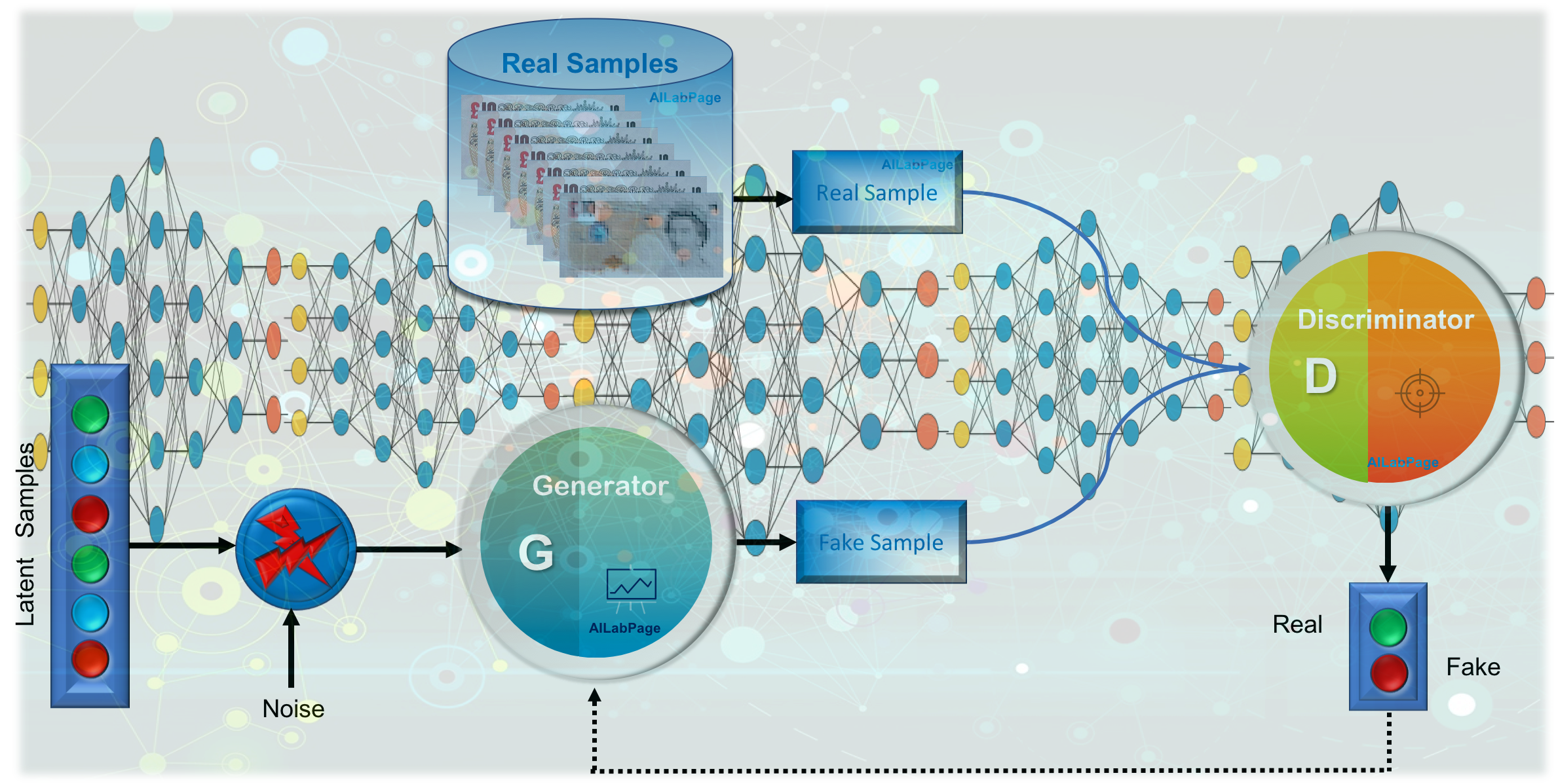

Unlike traditional neural networks that we use to map complex input-output relationships, GANs operate on a more adversarial mechanism. In essence, they consist of two neural networks—the Generator and the Discriminator—locked in a competitive loop. From my personal experience working with GANs in lab environments, this interplay feels more like a game, where the Generator network tries to “trick” the Discriminator by generating data that mimics real-world samples, while the Discriminator continuously learns to differentiate between real and generated data.

At AILabPage, it was thrilling to observe how the Generator starts off producing almost nonsensical outputs—often just random noise—but over multiple training iterations, it gets better at creating increasingly realistic outputs, thanks to the adversarial feedback loop. This real-time transformation highlights the power of GANs in producing high-quality generative models, especially in fields like image synthesis or data augmentation.

GANs are not a broad architectural paradigm like CNNs or RNNs; they are a specific framework for training generative models. Generative models are the broader category, and GANs are one subset of it.

Unlike conventional models, a GAN is not trained as a single feed-forward mapping from inputs to known targets. Instead, its adversarial nature provides a dynamic feedback loop that feels almost human-like in its learning, constantly refining and improving based on the challenge set by the opposing network. This hands-on journey with GANs truly changed my perspective on generative modeling, teaching me the profound impact of unsupervised learning and model optimization.

Unpacking Generative Adversarial Networks

Introduced by Ian Goodfellow and his team back in 2014, GANs are still a relatively “young” yet groundbreaking member of the Deep Neural Network family, standing out because of their unique unsupervised learning mechanism. It’s fascinating how GANs, through the rivalry between their two networks, push both to improve, making them highly effective for tasks that involve generating new, previously unseen data. A Generative Adversarial Network is simply another type of neural network architecture used for generative modeling. In simple words, it creates samples that are similar to the originals but have some unique differences.

The word “adversarial” is commonly defined as “involving two people or two sides who oppose each other”. An adversarial system of justice, for example, has prosecution and defence opposing each other. I do wonder why there is a need for systems that generate almost real-looking images when they can be misused more easily than they can be used well.

GANs are a class of algorithms used in unsupervised learning. As the name suggests, they are called adversarial networks because they are made up of two competing neural networks that play a zero-sum game against each other. Each network is assigned a different role:

- Generator: The Generator neural network is responsible for creating new data instances, learning from existing data to generate novel outputs in various domains like images, text, and music.

- Discriminator: The Discriminator neural network evaluates the outputs generated by the Generator, distinguishing between real and generated data, providing feedback to improve the Generator’s performance.

The cycle continues until the results are accurate or nearly perfect. To understand GANs, let’s take a scenario from my home: My son is a much better chess player than I am, and if I want to improve, I should play with him. Every time I lose, I learn from my mistakes, and through constant practice, my skills improve. Similarly, in the GAN process, the Generator and Discriminator constantly learn from each other, refining their abilities with each cycle.

If you’re still confused, it’s okay! Let me give a real-world example to clarify further. Imagine a painter trying to create a masterpiece. The painter works hard, but the judge—who has a keen eye for art—critiques each piece. Over time, the painter learns from the critiques, gradually improving their work. The painter (like the Generator) tries to create the best possible art, while the judge (like the Discriminator) provides feedback that pushes the artist to improve with each attempt.

The Power of GANs in Creative AI

In my realm of expertise and personal pursuits, which encompass Theoretical Physics, Deep Learning, Photography, and AI, I hold a profound affinity for GANs and perhaps allocate around 20% of my attention to CNNs.

The impact of GANs on my endeavours has been truly remarkable, elevating my cognitive capacities and thought processes, and leading to significant improvements across various facets of my work domains.

- Data Generation: Generative models specialize in creating novel and realistic data instances across various domains, including images, text, and music, by learning and mimicking patterns from existing data.

- Variety of Architectures: Generative models encompass various architectures like Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), and Transformers, each tailored for specific generative tasks, such as image synthesis, style transfer, and text generation.

- Creative Content: Generative models empower creative applications by producing original artwork, generating realistic images, composing music, and even crafting engaging storytelling through text generation, showcasing their potential for artistic expression.

- Data Augmentation: Generative models contribute to data augmentation in machine learning by generating additional training data, thereby enhancing model robustness and performance, which is particularly beneficial in scenarios with limited labeled data.

- Future Innovations: As a forefront technology, generative models continue to evolve, paving the way for advancements in AI-driven content creation, virtual reality experiences, and personalized recommendations, reshaping how we interact with and consume digital content.

Generative models continue to evolve, and their impact on various industries and domains is poised to be profound, unlocking new avenues for creativity and advancing the boundaries of artificial intelligence. The journey of generative models is a testament to the power of machine learning in unleashing my ingenuity and pushing the boundaries of what’s possible in the digital realm.

Adversarial training is “the most interesting idea in the last 10 years in the field of machine learning.” Yann LeCun, Facebook’s AI research director

Looking into GANs Realm

In the realm of GANs, the chess example above mirrors the synergy between generator and discriminator, each refining the other through adversarial collaboration. Just as in chess, where each move refines your understanding and strategy, GANs harness adversarial forces to sculpt data into astonishingly authentic creations.

Your journey from apprentice to adversary mirrors the profound metamorphosis in GANs, shaping the contours of innovation through the crucible of competition and enlightenment.

GANs Architecture

Generative Adversarial Networks (GANs) consist of two neural networks, a Generator and a Discriminator, competing in a zero-sum game. The Generator creates synthetic data, while the Discriminator evaluates its authenticity against real data. This adversarial process refines both networks, leading to high-quality data generation. GANs have revolutionized applications like image synthesis, deepfake creation, and data augmentation, making them essential in AI-driven creativity and generative modeling.

| # | Action | Description |

|---|---|---|

| 1 | Sample random noise from the latent space. | Initial input to the Generator. |

| 2 | Pass the noise vector to the Generator. | Feeding noise to the model. |

| 3 | Generate fake data using the Generator. | Generator produces synthetic data. |

| 4 | Pass the fake data to the Discriminator. | Discriminator evaluates the fake data. |

| 5 | Pass real data to the Discriminator. | Real data is also input to the Discriminator. |

| 6 | Discriminator predicts whether the input is real or fake. | Classifies real vs. fake data. |

| 7 | Compute the Discriminator loss based on its predictions. | Loss function calculation. |

| 8 | Backpropagate the Discriminator loss. | Update gradients for Discriminator. |

| 9 | Perform gradient descent for the Discriminator. | Optimization step for Discriminator. |

| 10 | Update the Discriminator’s weights. | Apply weight changes. |

| 11 | Compute the Generator loss based on the Discriminator’s output. | Generator’s objective function. |

| 12 | Backpropagate the Generator loss. | Update gradients for Generator. |

| 13 | Perform gradient descent for the Generator. | Optimization step for Generator. |

| 14 | Update the Generator’s weights. | Apply weight changes. |

| 15 | Update the Generator’s parameters. | Refine Generator’s model parameters. |

| 16 | Update the Discriminator’s parameters. | Refine Discriminator’s model parameters. |

| 17 | Output the generated data from the Generator. | Final synthetic data output. |

| 18 | End the training process. | Completion of the GAN training cycle. |
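The steps in the table can be condensed into a few lines of code. Below is a minimal sketch in plain Python, assuming a deliberately tiny setup that does not come from the experiments described in this post: a one-dimensional Generator `G(z) = a*z + b`, a logistic Discriminator `D(x) = sigmoid(w*x + c)`, real data drawn from a Gaussian with mean 4, and hand-derived gradients. The function name `train_toy_gan` and every parameter value are hypothetical; a practical GAN would use a deep learning library and mini-batches.

```python
import math
import random


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def safe_log(p, eps=1e-12):
    # Clamp to avoid log(0) when a probability saturates.
    return math.log(max(p, eps))


def train_toy_gan(steps=200, lr=0.05, seed=0):
    """Alternate Discriminator and Generator updates on 1-D data.

    Generator:      G(z) = a * z + b            (toy assumption)
    Discriminator:  D(x) = sigmoid(w * x + c)   (toy assumption)
    """
    rng = random.Random(seed)
    a, b = 1.0, 0.0   # Generator parameters
    w, c = 0.1, 0.0   # Discriminator parameters
    history = []
    for _ in range(steps):
        # Steps 1-3: sample noise and generate a fake sample.
        z = rng.gauss(0.0, 1.0)
        fake = a * z + b
        # Step 5: draw a real sample (assumed real data: N(4, 0.5)).
        real = rng.gauss(4.0, 0.5)

        # Steps 4-7: Discriminator predictions and loss.
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        d_loss = -(safe_log(d_real) + safe_log(1.0 - d_fake))

        # Steps 8-10: gradient of d_loss w.r.t. (w, c), then descent.
        grad_w = -(1.0 - d_real) * real + d_fake * fake
        grad_c = -(1.0 - d_real) + d_fake
        w -= lr * grad_w
        c -= lr * grad_c

        # Step 11: Generator loss (non-saturating form), using the
        # freshly updated Discriminator.
        d_fake = sigmoid(w * fake + c)
        g_loss = -safe_log(d_fake)

        # Steps 12-14: gradient of g_loss w.r.t. (a, b), then descent.
        grad_fake = -(1.0 - d_fake) * w
        a -= lr * grad_fake * z
        b -= lr * grad_fake

        history.append((d_loss, g_loss))
    return (a, b), (w, c), history
```

Calling `train_toy_gan(steps=200)` returns the final Generator parameters, the final Discriminator parameters, and the per-step loss pairs. Because this toy Discriminator is a single logistic unit, it mostly judges where samples sit on the number line, so the sketch illustrates the alternating updates rather than a high-quality generator.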

GANs leverage a Generator-Discriminator architecture to create realistic synthetic data through adversarial training. This approach enhances applications in AI, from image generation to deepfake creation and data augmentation, driving advancements in generative modeling.

The Generator

The Generator in GANs is the creative engine, crafting data that mimics real-world inputs. It starts with random noise and, through training, refines its output to “fool” the Discriminator by producing increasingly realistic samples.

Think of it as the artist in this adversarial game, constantly iterating to improve.

- Purpose: The generator creates synthetic data (e.g., images, text, or audio) from random noise (usually a latent vector sampled from a probability distribution like a Gaussian).

- Input: A random noise vector (latent space).

- Output: Synthetic data that mimics the real data distribution.

- Training Goal: To produce data so realistic that the discriminator cannot distinguish it from real data.

- Architecture: Typically a neural network (e.g., convolutional neural networks (CNNs) for images or transformers for text).

- Creative Engine: The Generator is the heart of creativity in GANs, generating new, realistic data instances by transforming random noise into meaningful outputs, mimicking real-world inputs in various domains.

- Continuous Refinement: Through iterative training, the Generator constantly refines its outputs to produce more realistic samples, evolving over time to “fool” the Discriminator, improving with each cycle.

The generator network’s job is to create synthetic outputs, such as images, from random noise. This neural network is called the Generator because it generates new data instances.
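To make the input and output of the Generator concrete, here is a minimal sketch, assuming a single linear layer followed by `tanh` in place of a real deep network. The names `make_generator` and `sample_latent`, the layer sizes, and the weight range are all hypothetical choices for illustration.

```python
import math
import random


def sample_latent(latent_dim, rng):
    # Input: a random noise vector drawn from a standard Gaussian.
    return [rng.gauss(0.0, 1.0) for _ in range(latent_dim)]


def make_generator(latent_dim, out_dim, seed=0):
    # One randomly initialised linear layer; training would adjust these.
    rng = random.Random(seed)
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(latent_dim)]
               for _ in range(out_dim)]
    biases = [0.0] * out_dim

    def generate(z):
        # Output: a synthetic sample with values squashed into [-1, 1].
        return [math.tanh(sum(w * zi for w, zi in zip(row, z)) + bias)
                for row, bias in zip(weights, biases)]

    return generate
```

For example, `make_generator(3, 5)(sample_latent(3, random.Random(1)))` yields a list of five values, which is the kind of untrained, noise-like output the Generator starts with.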

The Discriminator

The Discriminator, on the other hand, acts as the critic. Its job is to differentiate between real and generated data. It provides feedback to the Generator, helping it refine its outputs by identifying whether the data is genuine or artificial.

The discriminator tries to determine and report whether its input is real or fake. In other words, it evaluates the work of the first neural network (the generator) for authenticity.

- Purpose: The discriminator acts as a classifier that distinguishes between real data (from the training set) and fake data (generated by the generator).

- Input: Real data or synthetic data from the generator.

- Output: A probability score between 0 and 1 (values near 0 mean fake, values near 1 mean real).

- Training Goal: To correctly classify real and fake data.

- Architecture: Also a neural network, often similar to the generator but optimized for classification.

- Critical Evaluator: The Discriminator acts as the critic, distinguishing between real and generated data by evaluating authenticity, providing the Generator with valuable feedback for further refinement.

- Feedback Loop: By identifying whether data is genuine or artificial, the Discriminator helps the Generator improve over time, strengthening the adversarial training process for more accurate outputs.
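The Discriminator's role as a classifier can be sketched in a few lines. This is a minimal illustration, assuming a single logistic unit with fixed, hand-picked weights rather than a trained network; `make_discriminator`, `is_real`, and the 0.5 threshold are hypothetical names and choices.

```python
import math


def make_discriminator(weights, bias):
    def discriminate(x):
        # Weighted sum of the input features, squashed to a probability.
        logit = sum(w * xi for w, xi in zip(weights, x)) + bias
        return 1.0 / (1.0 + math.exp(-logit))

    return discriminate


def is_real(probability, threshold=0.5):
    # Turn the probability score into a real/fake decision.
    return probability >= threshold
```

With `d = make_discriminator([1.0, -1.0], 0.0)`, an input of `[2.0, 0.0]` scores above 0.5 and would be labelled real, while `[0.0, 2.0]` scores below 0.5 and would be labelled fake.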

In the GAN realm, the Generator and Discriminator work together in an adversarial collaboration, refining each other. The Generator creates realistic data, while the Discriminator critiques it, improving results over time.

How do GANs Work?

As described in the image above, GANs consist of two neural networks: a generator that generates a fake image (a currency note, in our running example) and a discriminator that classifies it as real or fake.

The generator is like an artist trying to create masterpieces that fit into the real world—it takes input and maps it to the desired data space, crafting outputs that (hopefully) look legit. Meanwhile, the discriminator plays the role of a tough art critic, scrutinizing each piece and deciding whether it’s real or just a clever fake.

These two go head-to-head in an AI showdown, constantly pushing each other to get better. Over time, the generator gets so good that even the sharpest-eyed discriminator starts second-guessing itself—welcome to the magic of GANs!

Adversarial Training Process

- Competition: The generator and discriminator are trained simultaneously in a minimax game, where the generator tries to fool the discriminator, and the discriminator tries to correctly classify real vs. fake data.

- Loss Functions:

  - Generator Loss: Measures how well the generator fools the discriminator (e.g., binary cross-entropy loss for fake data classified as real).

  - Discriminator Loss: Measures how well the discriminator distinguishes real from fake data.

- Optimization: Both networks are optimized using gradient descent, but their objectives are adversarial (the generator minimizes the same value function that the discriminator maximizes).
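The minimax game described above is usually written as a single value function. The form below is the standard objective from the original 2014 GAN paper. The symbols are not defined elsewhere in this post, so treat them as assumptions: $D(x)$ is the discriminator's probability that $x$ is real, $G(z)$ is the generator's output for noise $z$, $p_{\text{data}}$ is the real data distribution, and $p_z$ is the noise distribution.

```latex
\min_{G} \max_{D} \; V(D, G)
  = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_{z}}\big[\log\big(1 - D(G(z))\big)\big]
```

The discriminator pushes $V$ up by scoring real samples near 1 and generated samples near 0, while the generator pushes $V$ down by making $D(G(z))$ large. In practice the generator is often trained to maximize $\log D(G(z))$ instead, which gives stronger gradients early in training.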

The whole idea of the game is for the two neural networks to face off and get better with each attempt. The expected end result is a generator network that produces realistic or almost realistic outputs. The discriminator is trained to do its job, which is to correctly classify the input data as either real or fake. Its weights are updated so that the following probabilities are

- Maximised – the probability that any real data input is classified as “Belongs to the real dataset”

- Minimised – the probability that any fake data input is classified as “Belongs to the real dataset”

The generator is trained to fool the discriminator by generating data as close to real as possible. At this step, the generator’s weights are updated so that the following probability is

- Maximised – the probability that any fake data input is classified as “Belongs to the real dataset”

At the end of several training iterations, a conclusion is drawn about whether the Generator and Discriminator have reached a point where no further improvement can be made.
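The maximised and minimised targets above map directly onto binary cross-entropy labels. The sketch below assumes the common convention of label 1 for “belongs to the real dataset” and label 0 for fake; the helper names `bce`, `discriminator_loss`, and `generator_loss` are hypothetical.

```python
import math


def bce(prob, target, eps=1e-12):
    # Binary cross-entropy for one prediction, clamped to avoid log(0).
    prob = min(max(prob, eps), 1.0 - eps)
    return -(target * math.log(prob) + (1.0 - target) * math.log(1.0 - prob))


def discriminator_loss(d_real, d_fake):
    # Discriminator wants D(real) -> 1 and D(fake) -> 0.
    return bce(d_real, 1.0) + bce(d_fake, 0.0)


def generator_loss(d_fake):
    # Generator wants the Discriminator to say "real" for its fakes.
    return bce(d_fake, 1.0)
```

A confident, correct Discriminator (`d_real=0.9`, `d_fake=0.1`) has a lower loss than an unsure one (`0.6`, `0.4`), while the Generator's loss falls as `d_fake` rises. When the Discriminator outputs 0.5 for everything, its loss is `2 * log(2)`, which is the value associated with the equilibrium where it can no longer tell real from fake.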

Decoding GANs: AI’s Fake-Making Factory

Ever wondered how AI conjures up hyper-realistic faces, art, or even deepfakes? Enter Generative Adversarial Networks (GANs), the ultimate AI battle where a generator creates fake data and a discriminator sniffs out fraud. Like a con artist vs. detective game, they sharpen each other.

GANs are AI’s creative powerhouses, generating realistic data through a battle between two networks: a faker (generator) and a detective (discriminator). This diagram walks through the process—from randomness to mastery—covering training, challenges, and how AI learns to control outputs like a pro.

Latent Space

- Purpose: The latent space is a low-dimensional representation of the data, often a random noise vector, from which the generator creates synthetic data.

- Characteristics: The latent space encodes meaningful features of the data distribution, and manipulating it can control the output of the generator (e.g., generating specific types of images).

- Sampling: Random noise vectors are sampled from a distribution (e.g., Gaussian) to feed into the generator.
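Sampling from the latent space and moving through it can be sketched without any model at all. The example below assumes a standard Gaussian latent distribution and simple linear interpolation between two latent vectors; `sample_latent` and `interpolate` are hypothetical helper names, and each interpolated vector would be passed to a trained generator to see the output change gradually.

```python
import random


def sample_latent(dim, rng):
    # Draw one latent vector from a standard Gaussian.
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]


def interpolate(z_start, z_end, steps):
    # Evenly spaced points on the straight line from z_start to z_end.
    # Requires steps >= 2 so that both endpoints are included.
    path = []
    for i in range(steps):
        t = i / (steps - 1)
        path.append([(1.0 - t) * s + t * e for s, e in zip(z_start, z_end)])
    return path
```

For instance, `interpolate([0.0, 2.0], [1.0, 4.0], 3)` returns the start vector, the midpoint `[0.5, 3.0]`, and the end vector.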

Training reaches its target when the generator produces synthetic data realistic enough that the discriminator fails to differentiate between fake and real. From the two scenarios above, it’s clear that during training the two loss functions are optimized in opposite directions. This is similar to our chess example, where both parties feel they are at their best.

Loss Functions and Training Dynamics

- Minimax Game: The training process is framed as a minimax optimization problem, where the generator minimizes the discriminator’s ability to distinguish real from fake, and the discriminator maximizes its classification accuracy.

- Common Loss Functions:

  - Binary Cross-Entropy Loss: Used for standard GANs.

  - Wasserstein Loss: Used in Wasserstein GANs (WGANs) to improve training stability.

  - Least Squares Loss: Used in LSGANs to address vanishing gradients.

- Training Challenges: GANs are notoriously difficult to train due to issues like mode collapse (generator produces limited varieties of outputs) and non-convergence.
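The three loss families listed above differ mainly in how they score the discriminator's (or critic's) outputs on generated samples. Here is a minimal side-by-side sketch, assuming the commonly used forms: non-saturating binary cross-entropy, the Wasserstein critic difference, and least squares with a target of 1 for “real”. The function names are hypothetical, and the inputs are plain lists of scores rather than tensors.

```python
import math


def mean(values):
    return sum(values) / len(values)


def bce_generator_loss(d_fake_probs, eps=1e-12):
    # Standard GAN (non-saturating): -log D(G(z)), with D in (0, 1).
    return mean([-math.log(max(p, eps)) for p in d_fake_probs])


def wasserstein_generator_loss(critic_fake_scores):
    # WGAN: the critic outputs unbounded scores; the generator raises them.
    return -mean(critic_fake_scores)


def wasserstein_critic_loss(critic_real_scores, critic_fake_scores):
    # WGAN critic: score real samples higher than generated ones.
    return mean(critic_fake_scores) - mean(critic_real_scores)


def least_squares_generator_loss(d_fake_outputs):
    # LSGAN: squared distance of D(G(z)) from the "real" target 1.
    return 0.5 * mean([(d - 1.0) ** 2 for d in d_fake_outputs])
```

As a quick check, `wasserstein_critic_loss([2.0, 4.0], [1.0, 3.0])` is `-1.0` and `least_squares_generator_loss([1.0, 0.0])` is `0.25`. Note that a WGAN additionally needs a constraint on the critic (weight clipping or a gradient penalty), which this sketch leaves out.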

Conditional GANs (cGANs)

- Additional Component: Conditional GANs introduce a conditional vector (e.g., class labels or text descriptions) to guide the generator in producing specific types of outputs.

- Use Case: Useful for tasks like generating images of a specific class or style.
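One simple way to feed the conditional vector to the generator is to append a one-hot class label to the noise vector. The sketch below assumes integer class labels and list-based vectors; `one_hot` and `condition_input` are hypothetical helper names. In the usual conditional GAN setup the discriminator receives the same label alongside the sample it is judging.

```python
def one_hot(label, num_classes):
    # Encode an integer class label as a vector with a single 1.0.
    vec = [0.0] * num_classes
    vec[label] = 1.0
    return vec


def condition_input(z, label, num_classes):
    # Concatenate the noise vector with the one-hot label.
    return list(z) + one_hot(label, num_classes)
```

For example, `condition_input([0.3, -1.2], 2, 4)` gives `[0.3, -1.2, 0.0, 0.0, 1.0, 0.0]`, so a generator that expects a 2-dimensional noise vector now takes a 6-dimensional input.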

These components work together to enable GANs to generate high-quality, realistic data. Still, they also introduce challenges like training instability and mode collapse, which require careful tuning and advanced techniques to address.

Steps Involved in Training GANs

Training Generative Adversarial Networks (GANs) involves a structured process where two neural networks, the generator and the discriminator, engage in a feedback loop to improve their performance iteratively.

Step 1: Clarifying the Goal

First, get crystal clear on your goal. Are you generating fake videos from live footage or creating artificial text? Defining the objective upfront is crucial, as it guides every step of the process. Without this clarity, your journey won’t yield meaningful results.

Step 2: Architecture Setup

Choosing the right model architecture is vital. Will you be using Convolutional Neural Networks (CNNs) for image-based data or a simple Multilayer Perceptron (MLP) for text generation? The architecture should be driven by the problem you’re solving.

Step 3: Training the Discriminator with Real Data

For training the Discriminator, you’ll feed it real data—in this case, authentic currency images. Using CNNs, train it to confidently identify what’s real, setting a solid baseline for differentiation between real and fake.

Step 4: Training the Discriminator with Fake Data

Now, collect the data generated by your Generator. Feed these “fake” samples to the Discriminator and teach it to correctly identify them as artificial. This is key for setting up the adversarial balance.

Step 5: Training the Generator with Discriminator Feedback

With a trained Discriminator, you can use its feedback as an objective function to guide your Generator’s learning. The goal is for the Generator to gradually learn how to deceive the Discriminator with increasingly realistic data.

Step 6: Iteration and Refinement

Repeat steps 3 to 5 iteratively. This cyclical process helps the Generator and Discriminator improve in tandem. Each loop brings the model closer to creating realistic outputs.

Step 7: Performance Check

Now, it’s time to evaluate the generated data manually. Take a close look at the samples and check whether they meet the expected quality. This step is critical to ensure you’re heading in the right direction.

Step 8: Decision to Continue or Stop

Based on your performance check, decide whether to continue training or stop. If the generated data is not up to your expectations, continue fine-tuning. If it’s close to perfect, it might be time to finalize your model.
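The manual check in Steps 7 and 8 can be complemented by a simple numeric signal. The sketch below is an assumption of mine rather than part of the procedure above: it measures how often the Discriminator is right on a held-out batch and suggests stopping when that accuracy is close to chance. The names `discriminator_accuracy` and `should_stop`, the 0.5 threshold, and the 0.05 tolerance are all hypothetical.

```python
def discriminator_accuracy(real_scores, fake_scores, threshold=0.5):
    # Count real samples scored as real and fake samples scored as fake.
    correct = sum(1 for s in real_scores if s >= threshold)
    correct += sum(1 for s in fake_scores if s < threshold)
    return correct / (len(real_scores) + len(fake_scores))


def should_stop(real_scores, fake_scores, tolerance=0.05):
    # Accuracy near 0.5 means the Discriminator is close to guessing.
    accuracy = discriminator_accuracy(real_scores, fake_scores)
    return abs(accuracy - 0.5) <= tolerance
```

With scores `[0.9, 0.8]` for real data and `[0.1, 0.2]` for fakes, accuracy is 1.0 and training should continue. Accuracy near 0.5 can also mean the Discriminator itself is weak, so this signal is best read together with a visual inspection of the samples.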

The training of GANs culminates in a sophisticated balance between the generator creating realistic outputs and the discriminator effectively identifying those outputs, fostering continual improvement in generative capabilities. Over multiple iterations, the generator learns to create data that closely resembles the training dataset, while the discriminator hones its ability to distinguish real from generated samples.

This dynamic interplay not only enhances the quality of the generated data but also pushes the boundaries of creativity in applications such as image synthesis, video generation, and even music composition. Through this iterative learning process, GANs are positioned at the forefront of generative modeling in machine learning.

Example

Remember the chess scenario from my home—a game between my son, Krishna, and me. If I play continuously against him, my game is bound to improve. Behind the scenes, I analyze my mistakes and his winning strategies. To stand a chance in the next game, I must devise a strategy that can outmaneuver him.

Think of the “two neural networks” in a GAN as competitors that act as both advisors and opponents to each other.

I am the ‘Generator,’ and my son is the ‘Discriminator.’ Right now, as a Grandmaster, he is far more powerful than me in chess. But if I reach a level where he can no longer beat me, I will have outmatched the Discriminator and become the ultimate strategist.

From the above example, the picture is clear: in the realm of Generative Adversarial Networks (GANs), two neural networks interact dynamically as both opponents and advisors.

Analysing our Example

I assume the role of the “generator,” while my son embodies the “discriminator,” possessing formidable prowess in the chess arena. An ongoing chess match between the two of us becomes a crucible for skill enhancement. Through persistent engagement, my strategic acumen evolves, informed by my son’s astute judgments. Let me summarise the whole experience in the seven points below:

| # | Concept | Explanation | GAN Analogy |

|---|---|---|---|

| 1 | Continuous Learning for Improvement | – I refine my strategy with every match, learning from past defeats. – It feels like a never-ending comedy show where I keep getting schooled. | – Just like GANs, where the Discriminator constantly pushes the Generator to improve. – The Generator keeps refining itself to fool the Discriminator better. |

| 2 | Strategy Based on Adversarial Collaboration | – As the “Generator,” I must outwit my “Discriminator” (my son). – Each game serves as an opportunity to test and refine my tactics. | – GANs improve through adversarial training, where both models compete and grow. – The Discriminator forces the Generator to become more sophisticated. |

| 3 | Post-Game Reflection as Growth | – Reviewing my mistakes helps me spot weaknesses in my gameplay. – Understanding my son’s moves allows me to anticipate and counter them. | – The Discriminator’s feedback helps the Generator adjust and improve its outputs. – Each cycle of learning makes the Generator more effective. |

| 4 | Learning from Mistakes | – Every misstep highlights areas where I need improvement. – I tweak my strategy based on what worked and what failed. | – GANs use failure as a learning mechanism to generate more realistic data. – The Generator iterates until it successfully fools the Discriminator. |

| 5 | Expanding Strategic Possibilities | – The more I learn, the wider my strategic playbook becomes. – I experiment with new tactics, adding unpredictability to my game. | – The Generator in GANs explores diverse ways to create realistic data. – Innovation happens when the Generator steps beyond obvious patterns. |

| 6 | Beyond Emulation to Innovation | – I move from simply mimicking my son’s tactics to creating my own. – My gameplay becomes more creative and less predictable. | – A well-trained Generator learns to create unique, lifelike outputs. – True success happens when the Generator generates something truly novel. |

| 7 | Creating a Multidimensional Approach | – I integrate all my learnings into a well-rounded, adaptable strategy. – My gameplay evolves beyond simple tricks, making me a formidable opponent. | – A strong Generator doesn’t rely on a single trick but adapts dynamically. – It disrupts predictable patterns, achieving higher accuracy in deception. |

The fusion of my commitment, adaptive learning, the physics and mathematics side of my brain, and strategic ingenuity weaves a narrative of growth. With every move, I get an inch closer to the horizon of triumph, where the scales tip in my favour. As the embodiment of perseverance, I prepare to challenge my son’s supremacy, armed with a refined strategy and unfazed by prior setbacks.

Enhancing Chess Game Strategy Through GANs

Training a GAN inspired by the chess analogy involves iterative steps to improve the “generator’s” game against the powerful “discriminator.” Please note that I list below all 10 steps used in the example above, but I have not defined all of them in code or in detail for free subscriptions. Sample code is attached below in picture format. You can use the steps and the sample code to build your own end-to-end game plan; trust me, it’s very easy. Here are the 10 steps to train the GAN in this context.

- Initialization: Initialize the “generator” and “discriminator” networks with their respective parameters and architectures.

- Generate Moves: Let the “generator” produce a set of chess moves as its output. These moves represent the “generator’s” attempt at improving its game.

- Evaluate Moves: Use the “discriminator” to evaluate the quality of the generated moves. The “discriminator” acts as a judge, assigning scores to the moves based on their perceived quality.

- Feedback Loop: Based on the “discriminator’s” evaluations, the “generator” receives feedback on the quality of its generated moves. The feedback could be in the form of scores or labels indicating good or bad moves.

- Strategy Update: The “generator” adjusts its strategy based on the feedback received from the “discriminator.” It learns from its mistakes and attempts to generate better moves in response.

- Analysis of Mistakes: Analyze the specific mistakes made by the “generator” during the move generation process. Identify patterns or weaknesses that the “discriminator” detected.

- Improve Strategy: The “generator” strategizes to overcome the detected weaknesses. It aims to create moves that hold up against the “discriminator’s” identified areas of strength.

- Re-Generate Moves: With the updated strategy, the “generator” produces a new set of chess moves, incorporating the lessons learned from the previous feedback.

- Continuous Iteration: Repeat steps 3 to 8 for multiple iterations. The “generator” progressively refines its strategy by learning from its own mistakes and the “discriminator’s” evaluations.

- Convergence: Over successive iterations, the “generator’s” moves become more sophisticated and challenging for the “discriminator” to evaluate. The goal is to achieve a convergence where the “generator” produces high-quality moves that outwit the “discriminator.”

Remember that the above steps and below sample code template provide a conceptual framework for training the GAN based on my chess game analogy. The actual implementation of neural networks, move generation, evaluation, and strategy updates would involve translating these concepts into code using appropriate algorithms and libraries. Additionally, GANs often involve more complex architectures and training techniques, so further research and experimentation would be necessary for a practical implementation.

Code for the Above Example – Chess Game (Me and My Son)

Implementing a GAN in Rust for the example above is a bit complex due to the nature of GANs and the chess game analogy. However, here I am documenting a high-level outline of how anyone might structure a simplified version of the above concept in Rust.

[Code sample: shown as an image in the original post; not reproduced here.]

The above code is just a glimpse and a simplified outline, and thus lacks the complete intricacies of a full GAN implementation. In this scenario, the Generator generates chess moves, and the Discriminator evaluates the quality of those moves. The Generator then adjusts its strategy based on the feedback from the Discriminator.

Complexities such as training mechanisms, neural network architectures, and more sophisticated strategies are left out on purpose and are not available for a free subscription. Please take note of this, and study GANs and Rust programming further to build a more comprehensive and functional implementation.

Challenges with GANs

Generative Adversarial Networks have made significant strides in computer vision, offering exciting possibilities for generating realistic images and videos. However, they come with unique challenges that make them harder to train and apply effectively.

| Sr. No. | Challenge | Description | Impact |

|---|---|---|---|

| 1 | Instability and Non-Convergence | GANs often fail to converge, causing instability in training. | Model oscillation and poor generalization. |

| 2 | Overfitting | Due to instability, GANs may overfit the data and produce poor results. | Loss of model diversity and inability to generalize. |

| 3 | Training Complexity | Using backpropagation for two networks makes training and choosing objectives difficult. | Difficult to fine-tune, affecting learning efficiency. |

| 4 | Mode Collapse | The generator often produces limited variations, leading to repeated outputs. | Lack of diversity in generated data. |

| 5 | Hyperparameter Sensitivity | GANs are highly sensitive to hyperparameter settings, affecting performance. | Difficulty in finding optimal settings, slowing progress. |

| 6 | Discriminator Overtraining | The discriminator learns too quickly, causing the generator to lose gradient. | Prevents the generator from improving, leading to failure. |

| 7 | Lack of Objective Metrics | GANs face challenges in having a clear, objective evaluation metric. | Makes assessing model performance difficult and subjective. |

Training GANs presents significant obstacles, particularly in terms of stability and model convergence. Despite their potential, these issues need careful handling for effective use in fields like computer vision. At AILabPage, we explore these hurdles hands-on, working toward solutions to unlock GANs’ full potential.

Use Cases – Generative Adversarial Networks

Generative Adversarial Networks (GANs) represent a revolutionary approach in machine learning, combining two neural networks—the generator and discriminator—in a unique adversarial framework.

This technology has shown immense potential in various applications, from image synthesis to data augmentation, transforming industries. Generative adversarial networks have several real-life business use cases, a few of which are listed below:

| Use Case | Description | Industry Application |
|---|---|---|
| Image Generation and Enhancement | GANs are used to generate realistic images from random noise or low-resolution images (super-resolution). | Fashion, Gaming, Medical Imaging, Satellite Imagery |
| Data Augmentation | Synthetic data generation using GANs helps create more training data where real-world data is scarce. | Healthcare, Autonomous Driving, Robotics, Machine Learning Models |
| Video Generation | GANs can generate predictive or interpolated frames for more fluid and realistic video transitions. | Animation, Virtual Reality, Video Compression |
| Text-to-Image Synthesis | GANs convert text descriptions into high-quality images, capturing detailed representations. | Art and Design, Marketing, E-commerce |
| 3D Object Generation | GANs create or improve existing 3D models. | Game Development, Architecture, Augmented Reality (AR) |
| Detection of Counterfeit Currency | GANs can help identify fake currency by generating and analyzing counterfeit patterns. | Banking, Finance, Security |
| Creating Fake Artwork Samples | GANs can generate new artwork mimicking famous artists or styles. | Art Creation, Museum Curation, Authentication |
| Simulation & Planning with Time-Series | GANs simulate predictive time-series data used in video/audio scenarios. | Finance, Weather Forecasting, AI Simulations |

GANs are powerful tools that generate new data and enhance existing datasets by mimicking real-world distributions. Their versatility allows applications across multiple sectors, such as art creation, video generation, and counterfeit detection, showcasing their transformative impact on technology.

Mastering GANs – AILabPage Perspective

Generative Adversarial Networks (GANs) are a game-changer in the realm of generative models, where two neural networks, the Generator and the Discriminator, are pitted against each other in a zero-sum game.

At AILabPage, we’ve seen first-hand how GANs redefine creativity by using a feedback loop to push both models to their limits, producing data so realistic that it blurs the line between synthetic and actual input. Through training iterations, the Generator refines its samples, while the Discriminator becomes more adept at detecting fake data, creating a constant cycle of improvement.

- Dual Neural Network Interaction: GANs consist of a Generator that creates synthetic data and a Discriminator that evaluates its authenticity, driving the model to improve iteratively.

- Breakthrough in Unsupervised Learning: By pitting the Generator and Discriminator against each other, GANs push deep learning boundaries, excelling in image generation, data augmentation, and creative AI innovations.
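The zero-sum game described above is usually written as the value function V(D, G) = E[log D(x)] + E[log(1 - D(G(z)))] from the original GAN formulation: the Discriminator tries to maximize it and the Generator tries to minimize it. As a minimal sketch (the function name `gan_value` and the sample scores are made up for illustration), it can be estimated from a batch of Discriminator outputs:

```python
import math
from typing import Sequence


def gan_value(d_real: Sequence[float], d_fake: Sequence[float]) -> float:
    """Monte Carlo estimate of V(D, G) from discriminator probabilities.

    d_real: D(x) for real samples, each strictly between 0 and 1
    d_fake: D(G(z)) for generated samples, each strictly between 0 and 1
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


# Early training (hypothetical scores): the Discriminator separates
# real from generated samples easily.
early = gan_value(d_real=[0.95, 0.90, 0.97], d_fake=[0.05, 0.10, 0.02])

# Idealized equilibrium: the Discriminator can only guess, so every
# score is 0.5.
equilibrium = gan_value(d_real=[0.5, 0.5, 0.5], d_fake=[0.5, 0.5, 0.5])

print(f"V early       = {early:.4f}")
print(f"V equilibrium = {equilibrium:.4f}  (-log 4 = {-math.log(4):.4f})")
```

The Generator's payoff is simply -V, which is what makes the game zero-sum. When the Discriminator scores everything at 0.5, the estimate equals -log 4, the value the original formulation associates with a Generator whose output distribution matches the real data.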

Learn From the Creator – Generative Adversarial Networks

Let's hear directly from the creators. I feel it is a blessing to be able to watch this video again and again.

Must watch videos

This adversarial dynamic leads to highly accurate results across domains like image synthesis, text generation, and even fraud detection in FinTech. GANs have revolutionized generative modeling, thanks to their unsupervised learning architecture, showing immense promise in areas that demand high fidelity and precision.

Conclusion: In this post, we have learned some high-level basics of GANs—Generative Adversarial Networks. GANs are a recent development effort but look very promising and effective for many real-life business use cases. In the intricate fusion of Generative Adversarial Networks and the chessboard analogy, a symphony of innovation unfolds. This dual narrative resonates with the boundless potential of adversarial collaboration, illuminating the transformative power of synergy and creativity in both artificial intelligence and the human pursuit of mastery. As we continue to push the boundaries of this technology, its applications will only grow, reshaping industries and revolutionizing how we approach problem-solving.

—

Points to Note:

All credit, if any, remains with the original contributors. We have covered the basics of Generative Adversarial Networks. That said, such tasks often struggle to find the best pairing between CNN and RNN algorithms.

Books + Other material Referred

- Research through open internet, news portals, white papers and imparted knowledge via live conferences & lectures.

- Lab and hands-on experience of @AILabPage (Self-taught learners group) members.

- NIPS 2016 Tutorial: GANs

- Generative Adversarial Networks

- Unsupervised Representation Learning with Deep Convolutional GAN

Feedback & Further Questions

Do you need more details or have any questions on topics such as technology (including conventional architecture, machine learning, and deep learning), advanced data analysis (such as data science or big data), blockchain, theoretical physics, or photography? Please feel free to ask your question either by leaving a comment or by sending us an email. I will do my utmost to offer a response that meets your needs and expectations.

======================== About the Author ===================

Read about Author at : About Me

Thank you all for spending your time reading this post. Please share your opinions, comments, critiques, agreements, or disagreements. For more details about posts, subjects, and relevance, please read the disclaimer.

============================================================

You can use the example of a generator creating fake currency, where after a period of time the discriminator can no longer tell whether the currency is real or fake.

That assertion may not be entirely accurate, as reputable agencies typically employ legitimate discriminators that rely on accurate data for training. While your desired outcome might be attainable, it could potentially lead to a self-contained misrepresentation.

Many thanks for such an excellent work. I am happy to get paid subscription of your site, I am very much into GNN and I agree 100% on your writeup here. I like the most this part “Generative Neural Networks (GNNs) are innovative AI models that excel at creating new data instances by learning the underlying patterns and structures from existing data. GNNs have applications in image synthesis, text generation, and other creative domains.”
